
Fake news, disinformation and misinformation in social media: a review

Esma Aïmeur

Department of Computer Science and Operations Research (DIRO), University of Montreal, Montreal, Canada

Sabrine Amri

Gilles Brassard

Associated data

All the data and material are available in the papers cited in the references.

Online social networks (OSNs) are rapidly growing and have become a huge source of all kinds of global and local news for millions of users. However, OSNs are a double-edged sword. Despite the great advantages they offer, such as unlimited easy communication and instant news and information, they also have many disadvantages and issues. One of their most challenging issues is the spread of fake news. Fake news identification is still a complex unresolved issue. Furthermore, fake news detection on OSNs presents unique characteristics and challenges that make finding a solution anything but trivial. Moreover, artificial intelligence (AI) approaches are still incapable of overcoming this challenging problem. To make matters worse, AI techniques such as machine learning and deep learning are leveraged to deceive people by creating and disseminating fake content. Consequently, automatic fake news detection remains a huge challenge, primarily because the content is designed to closely resemble the truth, and it is often hard to determine its veracity by AI alone without additional information from third parties. This work aims to provide a comprehensive and systematic review of fake news research as well as a fundamental review of existing approaches used to detect and prevent fake news from spreading via OSNs. We present the research problem and the existing challenges, discuss the state of the art in existing approaches for fake news detection, and point out future research directions for tackling the challenges.

Introduction

Context and motivation

Fake news, disinformation and misinformation have become such a scourge that Marcia McNutt, president of the National Academy of Sciences of the United States, is quoted as having said (making an implicit reference to the COVID-19 pandemic) “Misinformation is worse than an epidemic: It spreads at the speed of light throughout the globe and can prove deadly when it reinforces misplaced personal bias against all trustworthy evidence” in a joint statement of the National Academies 1 posted on July 15, 2021. Indeed, although online social networks (OSNs), also called social media, have improved the ease with which real-time information is broadcast, their popularity and massive use have expanded the spread of fake news by increasing the speed and scope at which it can spread. Fake news may refer to the manipulation of information that can be carried out through the production of false information, or the distortion of true information. However, that does not mean that this problem was created only by social media. Long ago, there were rumors in the traditional media that Elvis was not dead, 2 that the Earth was flat, 3 that aliens had invaded us, 4 etc.

Social media has thus become a powerful source for fake news dissemination (Sharma et al. 2019 ; Shu et al. 2017 ). According to Pew Research Center’s analysis of news use across social media platforms, in 2020, about half of American adults got news on social media at least sometimes, 5 while in 2018, only one-fifth of them said they often got news via social media. 6

Hence, fake news can have a significant impact on society as manipulated and false content is easier to generate and harder to detect (Kumar and Shah 2018 ) and as disinformation actors change their tactics (Kumar and Shah 2018 ; Micallef et al. 2020 ). In 2017, Snow predicted in the MIT Technology Review (Snow 2017 ) that most individuals in mature economies will consume more false than valid information by 2022.

Recent news on the COVID-19 pandemic, which has flooded the web and created panic in many countries, has been reported as fake. 7 For example, holding your breath for ten seconds to one minute is not a self-test for COVID-19 8 (see Fig. 1 ). Similarly, online posts claiming to reveal various “cures” for COVID-19, such as eating boiled garlic or drinking chlorine dioxide (which is an industrial bleach), were verified 9 as fake and, in some cases, as dangerous; none of them will cure the infection.

Fig. 1 Fake news example about a self-test for COVID-19. Source: https://cdn.factcheck.org/UploadedFiles/Screenshot031120_false.jpg , last access date: 26-12-2022

Social media outperformed television as the major news source for young people of the UK and the USA. 10 Moreover, as it is easier to generate and disseminate news online than with traditional media or face to face, large volumes of fake news are produced online for many reasons (Shu et al. 2017 ). Furthermore, it has been reported in a previous study about the spread of online news on Twitter (Vosoughi et al. 2018 ) that the spread of false news online is six times faster than truthful content and that 70% of the users could not distinguish real from fake news (Vosoughi et al. 2018 ) due to the attraction of the novelty of the latter (Bovet and Makse 2019 ). It was determined that falsehood spreads significantly farther, faster, deeper and more broadly than the truth in all categories of information, and the effects are more pronounced for false political news than for false news about terrorism, natural disasters, science, urban legends, or financial information (Vosoughi et al. 2018 ).

Over 1 million tweets were estimated to be related to fake news by the end of the 2016 US presidential election. 11 In 2017, in Germany, a government spokesman affirmed: “We are dealing with a phenomenon of a dimension that we have not seen before,” referring to an unprecedented spread of fake news on social networks. 12 Given the strength of this new phenomenon, fake news was chosen as the word of the year by the Macquarie dictionary both in 2016 13 and in 2018 14 as well as by the Collins dictionary in 2017. 15 , 16 In 2020, the new term “infodemic” was coined, reflecting researchers’ widespread concern (Gupta et al. 2022 ; Apuke and Omar 2021 ; Sharma et al. 2020 ; Hartley and Vu 2020 ; Micallef et al. 2020 ) about the proliferation of misinformation linked to the COVID-19 pandemic.

The Gartner Group’s top strategic predictions for 2018 and beyond included the need for IT leaders to quickly develop Artificial Intelligence (AI) algorithms to address counterfeit reality and fake news. 17 However, fake news identification is a complex issue. Snow ( 2017 ) questioned the ability of AI to win the war against fake news. Similarly, other researchers concurred that even the best AI for spotting fake news is still ineffective. 18 Besides, recent studies have shown that the power of AI algorithms to identify fake news is lower than their ability to create it (Paschen 2019 ). Consequently, automatic fake news detection remains a huge challenge, primarily because the content is designed to closely resemble the truth in order to deceive users, and as a result, it is often hard to determine its veracity by AI alone. Therefore, it is crucial to consider more effective approaches to solve the problem of fake news in social media.

Contribution

The fake news problem has been addressed by researchers from various perspectives related to different topics. These topics include, but are not restricted to, social science studies, which investigate why and who falls for fake news (Altay et al. 2022 ; Batailler et al. 2022 ; Sterret et al. 2018 ; Badawy et al. 2019 ; Pennycook and Rand 2020 ; Weiss et al. 2020 ; Guadagno and Guttieri 2021 ), whom to trust and how perceptions of misinformation and disinformation relate to media trust and media consumption patterns (Hameleers et al. 2022 ), how fake news differs from personal lies (Chiu and Oh 2021 ; Escolà-Gascón 2021 ), examine how the law can regulate digital disinformation and how governments can regulate the values of social media companies that themselves regulate disinformation spread on their platforms (Marsden et al. 2020 ; Schuyler 2019 ; Vasu et al. 2018 ; Burshtein 2017 ; Waldman 2017 ; Alemanno 2018 ; Verstraete et al. 2017 ), and discuss the challenges to democracy (Jungherr and Schroeder 2021 ); behavioral intervention studies, which examine what literacy ideas mean in the age of dis/mis- and malinformation (Carmi et al. 2020 ), investigate whether media literacy helps identification of fake news (Jones-Jang et al. 2021 ) and attempt to improve people’s news literacy (Apuke et al. 2022 ; Dame Adjin-Tettey 2022 ; Hameleers 2022 ; Nagel 2022 ; Jones-Jang et al. 2021 ; Mihailidis and Viotty 2017 ; García et al. 2020 ) by encouraging people to pause to assess credibility of headlines (Fazio 2020 ), promote civic online reasoning (McGrew 2020 ; McGrew et al. 2018 ) and critical thinking (Lutzke et al. 2019 ), together with evaluations of credibility indicators (Bhuiyan et al. 2020 ; Nygren et al. 2019 ; Shao et al. 2018a ; Pennycook et al. 2020a , b ; Clayton et al. 2020 ; Ozturk et al. 2015 ; Metzger et al. 2020 ; Sherman et al. 2020 ; Nekmat 2020 ; Brashier et al. 2021 ; Chung and Kim 2021 ; Lanius et al. 2021 ); as well as social media-driven studies, which investigate the effect of signals (e.g., sources) to detect and recognize fake news (Vraga and Bode 2017 ; Jakesch et al. 2019 ; Shen et al. 2019 ; Avram et al. 2020 ; Hameleers et al. 2020 ; Dias et al. 2020 ; Nyhan et al. 2020 ; Bode and Vraga 2015 ; Tsang 2020 ; Vishwakarma et al. 2019 ; Yavary et al. 2020 ) and investigate fake and reliable news sources using complex networks analysis based on search engine optimization metric (Mazzeo and Rapisarda 2022 ).

The impacts of fake news have reached various areas and disciplines beyond online social networks and society (García et al. 2020 ) such as economics (Clarke et al. 2020 ; Kogan et al. 2019 ; Goldstein and Yang 2019 ), psychology (Roozenbeek et al. 2020a ; Van der Linden and Roozenbeek 2020 ; Roozenbeek and van der Linden 2019 ), political science (Valenzuela et al. 2022 ; Bringula et al. 2022 ; Ricard and Medeiros 2020 ; Van der Linden et al. 2020 ; Allcott and Gentzkow 2017 ; Grinberg et al. 2019 ; Guess et al. 2019 ; Baptista and Gradim 2020 ), health science (Alonso-Galbán and Alemañy-Castilla 2022 ; Desai et al. 2022 ; Apuke and Omar 2021 ; Escolà-Gascón 2021 ; Wang et al. 2019c ; Hartley and Vu 2020 ; Micallef et al. 2020 ; Pennycook et al. 2020b ; Sharma et al. 2020 ; Roozenbeek et al. 2020b ), environmental science (e.g., climate change) (Treen et al. 2020 ; Lutzke et al. 2019 ; Lewandowsky 2020 ; Maertens et al. 2020 ), etc.

Interesting research has been carried out to review and study the fake news issue in online social networks. Some focus not only on fake news, but also distinguish between fake news and rumor (Bondielli and Marcelloni 2019 ; Meel and Vishwakarma 2020 ), while others tackle the whole problem, from characterization to processing techniques (Shu et al. 2017 ; Guo et al. 2020 ; Zhou and Zafarani 2020 ). However, they mostly focus on studying approaches from a machine learning perspective (Bondielli and Marcelloni 2019 ), data mining perspective (Shu et al. 2017 ), crowd intelligence perspective (Guo et al. 2020 ), or knowledge-based perspective (Zhou and Zafarani 2020 ). Furthermore, most of these studies ignore at least one of the mentioned perspectives, and in many cases, they do not cover other existing detection approaches using methods such as blockchain and fact-checking, as well as analysis on metrics used for Search Engine Optimization (Mazzeo and Rapisarda 2022 ). However, in our work and to the best of our knowledge, we cover all the approaches used for fake news detection. Indeed, we investigate the proposed solutions from broader perspectives (i.e., the detection techniques that are used, as well as the different aspects and types of the information used).

Therefore, in this paper, we are highly motivated by the following facts. First, fake news detection on social media is still in the early stage of development, and many challenging issues remain that require deeper investigation. Hence, it is necessary to discuss potential research directions that can improve fake news detection and mitigation tasks. Second, the dynamic nature of fake news propagation through social networks further complicates matters (Sharma et al. 2019 ). False information can easily reach and impact a large number of users in a short time (Friggeri et al. 2014 ; Qian et al. 2018 ). Moreover, fact-checking organizations cannot keep up with the dynamics of propagation as they require human verification, which can hold back a timely and cost-effective response (Kim et al. 2018 ; Ruchansky et al. 2017 ; Shu et al. 2018a ).

Our work focuses primarily on understanding the “fake news” problem, its related challenges and root causes, and reviewing automatic fake news detection and mitigation methods in online social networks as addressed by researchers. The main contributions that differentiate us from other works are summarized below:

- We present the general context from which the fake news problem emerged (i.e., online deception).

- We review existing definitions of fake news, identify the terms and features most commonly used to define fake news, and categorize related works accordingly.

- We propose a fake news typology classification based on the various categorizations of fake news reported in the literature.

- We point out the most challenging factors preventing researchers from proposing highly effective solutions for automatic fake news detection in social media.

- We highlight and classify representative studies in the domain of automatic fake news detection and mitigation on online social networks including the key methods and techniques used to generate detection models.

- We discuss the key shortcomings that may inhibit the effectiveness of the proposed fake news detection methods in online social networks.

- We provide recommendations that can help address these shortcomings and improve the quality of research in this domain.

The rest of this article is organized as follows. We explain the methodology with which the studied references are collected and selected in Sect. 2 . We introduce the online deception problem in Sect. 3 . We highlight the modern-day problem of fake news in Sect. 4 , followed by challenges facing fake news detection and mitigation tasks in Sect. 5 . We provide a comprehensive literature review of the most relevant scholarly works on fake news detection in Sect. 6 . We provide a critical discussion and recommendations that may fill some of the gaps we have identified, as well as a classification of the reviewed automatic fake news detection approaches, in Sect. 7 . Finally, we provide a conclusion and propose some future directions in Sect. 8 .

Review methodology

This section introduces the systematic review methodology on which we relied to perform our study. We start with the formulation of the research questions, which allowed us to select the relevant research literature. Then, we provide the different sources of information together with the search and inclusion/exclusion criteria we used to select the final set of papers.

Research questions formulation

The research scope, research questions, and inclusion/exclusion criteria were established following an initial evaluation of the literature and the following research questions were formulated and addressed.

- RQ1: What is fake news in social media, how is it defined in the literature, what are its related concepts, and what are its different types?

- RQ2: What are the existing challenges and issues related to fake news?

- RQ3: What are the available techniques used to perform fake news detection in social media?

Sources of information

We broadly searched for journal and conference research articles, books, and magazines as a source of data to extract relevant articles. We used the main scientific databases and digital libraries in our search, such as Google Scholar, 19 IEEE Xplore, 20 Springer Link, 21 ScienceDirect, 22 Scopus, 23 and ACM Digital Library. 24 We also screened most of the related high-profile conferences such as WWW, SIGKDD, VLDB and ICDE to find recent work.

Search criteria

We focused our research over a period of ten years, but we made sure that about two-thirds of the research papers that we considered were published in or after 2019. Additionally, we defined a set of keywords to search the above-mentioned scientific databases since we concentrated on reviewing the current state of the art in addition to the challenges and the future direction. The set of keywords includes the following terms: fake news, disinformation, misinformation, information disorder, social media, detection techniques, detection methods, survey, literature review.

Study selection, exclusion and inclusion criteria

To retrieve relevant research articles, based on our sources of information and search criteria, a systematic keyword-based search was carried out by posing different search queries, as shown in Table 1 .

Table 1 List of keywords for searching relevant articles
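As an illustration of how a keyword-based search of this kind could be set up, the following minimal sketch combines the keywords listed in the search criteria into boolean query strings. The grouping of the keywords into three lists, the `AND` syntax, and the function name `build_queries` are assumptions made for this example; they do not reproduce the actual queries of Table 1.

```python
from itertools import product

# Keywords taken from the search criteria stated in the text.
# Splitting them into three groups is an assumption of this sketch.
TOPIC_TERMS = ["fake news", "disinformation", "misinformation", "information disorder"]
CONTEXT_TERMS = ["social media"]
FOCUS_TERMS = ["detection techniques", "detection methods", "survey", "literature review"]


def build_queries(topics, contexts, focuses):
    """Return one boolean query string per (topic, context, focus) combination."""
    return [
        " AND ".join(f'"{term}"' for term in combo)
        for combo in product(topics, contexts, focuses)
    ]


queries = build_queries(TOPIC_TERMS, CONTEXT_TERMS, FOCUS_TERMS)
for query in queries[:3]:
    print(query)
```

Each resulting string can then be submitted to a digital library's search interface; the exact query syntax accepted by each library differs and would need to be adapted.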

We discovered a primary list of articles. On the obtained initial list of studies, we applied a set of inclusion/exclusion criteria presented in Table 2 to select the appropriate research papers. The inclusion and exclusion principles are applied to determine whether a study should be included or not.

Table 2 Inclusion and exclusion criteria

After reading the abstract, we excluded some articles that did not meet our criteria. We chose the most important research to help us understand the field. We reviewed the articles completely and found only 61 research papers that discuss the definition of the term fake news and its related concepts (see Table 4 ). We used the remaining papers to understand the field, reveal the challenges, review the detection techniques, and discuss future directions.

Table 4 Classification of fake news definitions based on the used term and features

A brief introduction of online deception

The Cambridge Online Dictionary defines deception as “the act of hiding the truth, especially to get an advantage.” Deception relies on people’s trust, doubt and strong emotions that may prevent them from thinking and acting clearly (Aïmeur et al. 2018 ). We also define it in previous work (Aïmeur et al. 2018 ) as the process that undermines the ability to consciously make decisions and take convenient actions, following personal values and boundaries. In other words, deception gets people to do things they would not otherwise do. In the context of online deception, several factors need to be considered: the deceiver, the purpose or aim of the deception, the social media service, the deception technique and the potential target (Aïmeur et al. 2018 ; Hage et al. 2021 ).

Researchers are working on developing new ways to protect users and prevent online deception (Aïmeur et al. 2018 ). Due to the sophistication of attacks, this is a complex task: malicious attackers are using increasingly complex tools and strategies to deceive users. Furthermore, the way information is organized and exchanged in social media may expose OSN users to many risks (Aïmeur et al. 2013 ).

In fact, this field is one of the recent research areas that need collaborative efforts of multidisciplinary practices such as psychology, sociology, journalism, computer science as well as cyber-security and digital marketing (which are not yet well explored in the field of dis/mis/malinformation but relevant for future research). Moreover, Ismailov et al. ( 2020 ) analyzed the main causes that could be responsible for the efficiency gap between laboratory results and real-world implementations.

Reviewing the state of the art in online deception is beyond the scope of this paper. However, we think it is crucial to note that fake news, misinformation and disinformation are indeed parts of the larger landscape of online deception (Hage et al. 2021 ).

Fake news, the modern-day problem

Fake news has existed for a very long time, long before its wide circulation was facilitated by the invention of the printing press. 25 For instance, Socrates was condemned to death more than twenty-five hundred years ago on the basis of the fake news that he was guilty of impiety against the pantheon of Athens and corruption of the youth. 26 A Google Trends Analysis of the term “fake news” reveals an explosion in popularity around the time of the 2016 US presidential election. 27 Fake news detection is a problem that has recently been addressed by numerous organizations, including the European Union 28 and NATO. 29

In this section, we first overview the fake news definitions as they were provided in the literature. We identify the terms and features used in the definitions, and we classify the latter based on them. Then, we provide a fake news typology based on distinct categorizations that we propose, and we define and compare the most cited forms of one specific fake news category (i.e., the intent-based fake news category).

Definitions of fake news

“Fake news” is defined in the Collins English Dictionary as false and often sensational information disseminated under the guise of news reporting, 30 yet the term has evolved over time and has become synonymous with the spread of false information (Cooke 2017 ).

The first definition of the term fake news was provided by Allcott and Gentzkow ( 2017 ) as news articles that are intentionally and verifiably false and could mislead readers. Then, other definitions were provided in the literature, but they all agree that fake news is false (i.e., non-factual). However, they disagree on the inclusion and exclusion of some related concepts such as satire, rumors, conspiracy theories, misinformation and hoaxes from the given definition. More recently, Nakov ( 2020 ) reported that the term fake news started to mean different things to different people, and for some politicians, it even means “news that I do not like.”

Hence, there is still no agreed definition of the term “fake news.” Moreover, we can find many terms and concepts in the literature that refer to fake news (Van der Linden et al. 2020 ; Molina et al. 2021 ) (Abu Arqoub et al. 2022 ; Allen et al. 2020 ; Allcott and Gentzkow 2017 ; Shu et al. 2017 ; Sharma et al. 2019 ; Zhou and Zafarani 2020 ; Zhang and Ghorbani 2020 ; Conroy et al. 2015 ; Celliers and Hattingh 2020 ; Nakov 2020 ; Shu et al. 2020c ; Jin et al. 2016 ; Rubin et al. 2016 ; Balmas 2014 ; Brewer et al. 2013 ; Egelhofer and Lecheler 2019 ; Mustafaraj and Metaxas 2017 ; Klein and Wueller 2017 ; Potthast et al. 2017 ; Lazer et al. 2018 ; Weiss et al. 2020 ; Tandoc Jr et al. 2021 ; Guadagno and Guttieri 2021 ), disinformation (Kapantai et al. 2021 ; Shu et al. 2020a , c ; Kumar et al. 2016 ; Bhattacharjee et al. 2020 ; Marsden et al. 2020 ; Jungherr and Schroeder 2021 ; Starbird et al. 2019 ; Ireton and Posetti 2018 ), misinformation (Wu et al. 2019 ; Shu et al. 2020c ; Shao et al. 2016 , 2018b ; Pennycook and Rand 2019 ; Micallef et al. 2020 ), malinformation (Dame Adjin-Tettey 2022 ) (Carmi et al. 2020 ; Shu et al. 2020c ), false information (Kumar and Shah 2018 ; Guo et al. 2020 ; Habib et al. 2019 ), information disorder (Shu et al. 2020c ; Wardle and Derakhshan 2017 ; Wardle 2018 ; Derakhshan and Wardle 2017 ), information warfare (Guadagno and Guttieri 2021 ) and information pollution (Meel and Vishwakarma 2020 ).

There is also a remarkable amount of disagreement over the classification of the term fake news in the research literature, as well as in policy (de Cock Buning 2018 ; ERGA 2018 , 2021 ). Some consider fake news as a type of misinformation (Allen et al. 2020 ; Singh et al. 2021 ; Ha et al. 2021 ; Pennycook and Rand 2019 ; Shao et al. 2018b ; Di Domenico et al. 2021 ; Sharma et al. 2019 ; Celliers and Hattingh 2020 ; Klein and Wueller 2017 ; Potthast et al. 2017 ; Islam et al. 2020 ), others consider it as a type of disinformation (de Cock Buning 2018 ) (Bringula et al. 2022 ; Baptista and Gradim 2022 ; Tsang 2020 ; Tandoc Jr et al. 2021 ; Bastick 2021 ; Khan et al. 2019 ; Shu et al. 2017 ; Nakov 2020 ; Shu et al. 2020c ; Egelhofer and Lecheler 2019 ), while others associate the term with both disinformation and misinformation (Wu et al. 2022 ; Dame Adjin-Tettey 2022 ; Hameleers et al. 2022 ; Carmi et al. 2020 ; Allcott and Gentzkow 2017 ; Zhang and Ghorbani 2020 ; Potthast et al. 2017 ; Weiss et al. 2020 ; Tandoc Jr et al. 2021 ; Guadagno and Guttieri 2021 ). On the other hand, some prefer to differentiate fake news from both terms (ERGA 2018 ; Molina et al. 2021 ; ERGA 2021 ) (Zhou and Zafarani 2020 ; Jin et al. 2016 ; Rubin et al. 2016 ; Balmas 2014 ; Brewer et al. 2013 ).

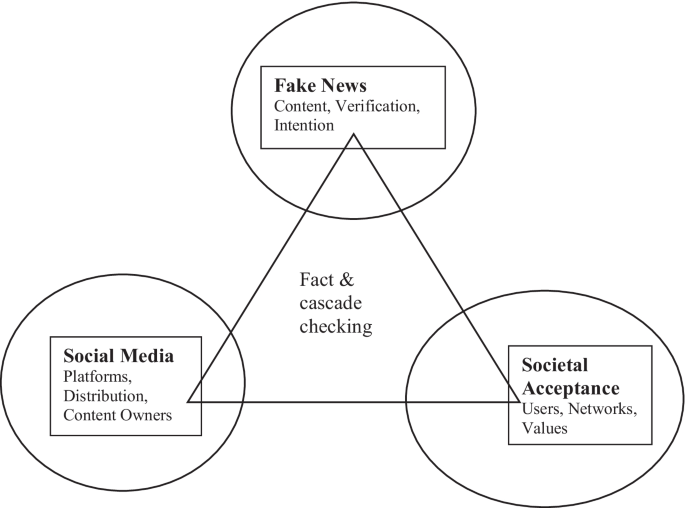

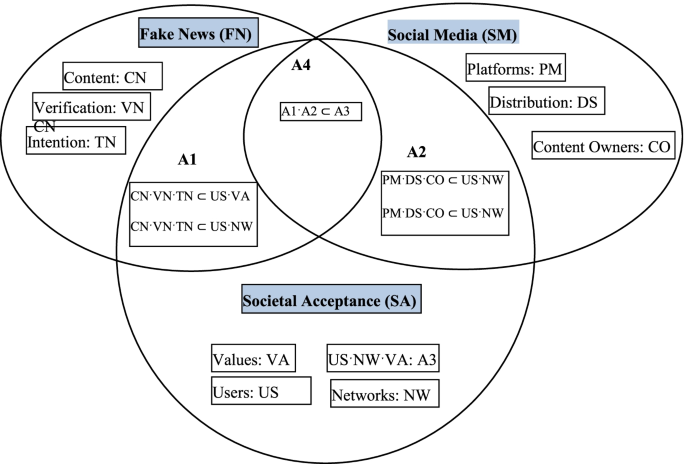

The existing terms can be separated into two groups. The first group represents the general terms, which are information disorder , false information and fake news , each of which includes a subset of terms from the second group. The second group represents the elementary terms, which are misinformation , disinformation and malinformation . The literature agrees on the definitions of the latter group, but there is still no agreed-upon definition of the first group. In Fig. 2 , we model the relationship between the most used terms in the literature.

Fig. 2 Modeling of the relationship between terms related to fake news

The terms most used in the literature to refer to, categorize and classify fake news can be summarized and defined as shown in Table 3 , in which we capture the similarities and show the differences between the different terms based on two common key features, which are the intent and the authenticity of the news content. The intent feature refers to the intention behind the term that is used (i.e., whether or not the purpose is to mislead or cause harm), whereas the authenticity feature refers to its factual aspect (i.e., whether the content is verifiably false or not, which we label as genuine in the second case). Some of these terms are explicitly used to refer to fake news (i.e., disinformation, misinformation and false information), while others are not (i.e., malinformation). In the comparison table, the empty dash (–) cell denotes that the classification does not apply.

Table 3 A comparison between used terms based on intent and authenticity
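The two features used in this comparison can be read as a small decision rule over the elementary terms. The sketch below is illustrative only: it assumes the commonly cited reading in which misinformation is false content shared without intent to mislead or harm, disinformation is false content shared with such intent, and malinformation is genuine content shared with intent to harm. The class and function names are hypothetical, and the sketch does not reproduce the rows of Table 3.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NewsItem:
    verifiably_false: bool  # authenticity feature: False means "genuine"
    intent_to_harm: bool    # intent feature: purpose is to mislead or cause harm


def elementary_term(item: NewsItem) -> str:
    """Map the two features to an elementary term (assumed definitions)."""
    if item.verifiably_false and item.intent_to_harm:
        return "disinformation"
    if item.verifiably_false:
        return "misinformation"
    if item.intent_to_harm:
        return "malinformation"
    return "-"  # genuine content with no harmful intent: classification does not apply


print(elementary_term(NewsItem(verifiably_false=True, intent_to_harm=False)))
```

In practice neither feature is directly observable, which is part of what makes automatic detection difficult; the rule only makes the conceptual distinction explicit.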

In Fig. 3 , we identify the different features used in the literature to define fake news (i.e., intent, authenticity and knowledge). Hence, some definitions are based on two key features, which are authenticity and intent (i.e., news articles that are intentionally and verifiably false and could mislead readers). However, other definitions are based on either authenticity or intent. Other researchers categorize false information on the web and social media based on its intent and knowledge (i.e., when there is a single ground truth). In Table 4 , we classify the existing fake news definitions based on the used term and the used features . In the classification, the references in the cells refer to the research study in which a fake news definition was provided, while the empty dash (–) cells denote that the classification does not apply.

Fig. 3 The features used for fake news definition

Fake news typology

Various categorizations of fake news have been provided in the literature. We can distinguish two major categories of fake news based on the studied perspective (i.e., intention or content) as shown in Fig. 4 . However, our proposed fake news typology is not about detection methods, and it is not exclusive. Hence, a given category of fake news can be described based on both perspectives (i.e., intention and content) at the same time. For instance, satire (i.e., intent-based fake news) can contain text and/or multimedia content types of data (e.g., headline, body, image, video) (i.e., content-based fake news) and so on.

Most researchers classify fake news based on the intent (Collins et al. 2020 ; Bondielli and Marcelloni 2019 ; Zannettou et al. 2019 ; Kumar et al. 2016 ; Wardle 2017 ; Shu et al. 2017 ; Kumar and Shah 2018 ) (see Sect. 4.2.2 ). However, other researchers (Parikh and Atrey 2018 ; Fraga-Lamas and Fernández-Caramés 2020 ; Hasan and Salah 2019 ; Masciari et al. 2020 ; Bakdash et al. 2018 ; Elhadad et al. 2019 ; Yang et al. 2019b ) focus on the content to categorize types of fake news through distinguishing the different formats and content types of data in the news (e.g., text and/or multimedia).

Recently, another classification was proposed by Zhang and Ghorbani ( 2020 ). It is based on the combination of content and intent to categorize fake news. They distinguish physical news content and non-physical news content from fake news. Physical content consists of the carriers and format of the news, and non-physical content consists of the opinions, emotions, attitudes and sentiments that the news creators want to express.
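Because the two perspectives of the typology are not exclusive, a single item can be described along both at once. The following minimal sketch shows one possible way to represent this as a data structure. The enum members are drawn from the forms and content types named in this section; the class names, field names, and the `is_multimodal` helper are hypothetical and introduced only for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class IntentForm(Enum):
    CLICKBAIT = "clickbait"
    HOAX = "hoax"
    RUMOR = "rumor"
    SATIRE = "satire"
    PROPAGANDA = "propaganda"
    FRAMING = "framing"
    CONSPIRACY_THEORY = "conspiracy theory"


class ContentType(Enum):
    TEXT = "text"
    IMAGE = "image"
    VIDEO = "video"
    AUDIO = "audio"


@dataclass(frozen=True)
class FakeNewsDescription:
    intent_form: IntentForm                # intent-based perspective
    content_types: FrozenSet[ContentType]  # content-based perspective

    def is_multimodal(self) -> bool:
        """True when the item combines more than one type of data."""
        return len(self.content_types) > 1


# A satirical post made of a headline (text) and an accompanying image.
satire_post = FakeNewsDescription(
    IntentForm.SATIRE, frozenset({ContentType.TEXT, ContentType.IMAGE})
)
print(satire_post.intent_form.value, satire_post.is_multimodal())
```

Keeping the two perspectives as separate fields reflects the point that the typology is descriptive rather than a partition: the same item carries one label from each perspective.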

Content-based fake news category

According to researchers of this category (Parikh and Atrey 2018 ; Fraga-Lamas and Fernández-Caramés 2020 ; Hasan and Salah 2019 ; Masciari et al. 2020 ; Bakdash et al. 2018 ; Elhadad et al. 2019 ; Yang et al. 2019b ), forms of fake news may include false text such as hyperlinks or embedded content; multimedia such as false videos (Demuyakor and Opata 2022 ), images (Masciari et al. 2020 ; Shen et al. 2019 ), audio (Demuyakor and Opata 2022 ) and so on. Moreover, we can also find multimodal content (Shu et al. 2020a ), that is, fake news articles and posts composed of multiple types of data combined together, for example, a fabricated image along with a text related to the image (Shu et al. 2020a ). In this category of fake news forms, we can mention as examples deepfake videos (Yang et al. 2019b ) and GAN-generated fake images (Zhang et al. 2019b ), which are artificial intelligence-based machine-generated fake content that is hard for unsophisticated social network users to identify.

The effects of these forms of fake news content on credibility assessment and on sharing intentions vary, which influences the spread of fake news on OSNs. For instance, people with little knowledge about the issue, compared to those who are strongly concerned about the key issue of fake news, tend to be easier to convince that misleading or fake news is real, especially when it is shared via a video modality as compared to the text or the audio modality (Demuyakor and Opata 2022 ).

Intent-based fake news category

The most often mentioned and discussed forms of fake news according to researchers in this category include but are not restricted to clickbait , hoax , rumor , satire , propaganda , framing , conspiracy theories and others. In the following subsections, we explain these types of fake news as they were defined in the literature and undertake a brief comparison between them as depicted in Table 5 . The following are the most cited forms of intent-based types of fake news, and their comparison is based on what we suspect are the most common criteria mentioned by researchers.

Table 5 A comparison between the different types of intent-based fake news

Clickbait refers to misleading headlines and thumbnails of content on the web (Zannettou et al. 2019 ) that tend to be fake stories with catchy headlines aimed at enticing the reader to click on a link (Collins et al. 2020 ). This type of fake news is considered to be the least severe type of false information because if a user reads/views the whole content, it is possible to distinguish if the headline and/or the thumbnail was misleading (Zannettou et al. 2019 ). However, the goal behind using clickbait is to increase the traffic to a website (Zannettou et al. 2019 ).

A hoax is a false (Zubiaga et al. 2018 ) or inaccurate (Zannettou et al. 2019 ) intentionally fabricated (Collins et al. 2020 ) news story used to masquerade the truth (Zubiaga et al. 2018 ) and is presented as factual (Zannettou et al. 2019 ) to deceive the public or audiences (Collins et al. 2020 ). This category is also known either as half-truth or factoid stories (Zannettou et al. 2019 ). Popular examples of hoaxes are stories that report the false death of celebrities (Zannettou et al. 2019 ) and public figures (Collins et al. 2020 ). Recently, hoaxes about the COVID-19 have been circulating through social media.

The term rumor refers to ambiguous or never confirmed claims (Zannettou et al. 2019 ) that are disseminated with a lack of evidence to support them (Sharma et al. 2019 ). This kind of information is widely propagated on OSNs (Zannettou et al. 2019 ). However, they are not necessarily false and may turn out to be true (Zubiaga et al. 2018 ). Rumors originate from unverified sources but may be true or false or remain unresolved (Zubiaga et al. 2018 ).

Satire refers to stories that contain a lot of irony and humor (Zannettou et al. 2019 ). It presents stories as news that might be factually incorrect, but the intent is not to deceive but rather to call out, ridicule, or to expose behavior that is shameful, corrupt, or otherwise “bad” (Golbeck et al. 2018 ). This is done with a fabricated story or by exaggerating the truth reported in mainstream media in the form of comedy (Collins et al. 2020 ). The intent behind satire seems legitimate, yet many authors (such as Wardle ( 2017 )) do include satire as a type of fake news: although there is no intention to cause harm, it has the potential to mislead or fool people.

Also, Golbeck et al. ( 2018 ) mention that there is a spectrum from fake to satirical news that they found to be exploited by many fake news sites. These sites used disclaimers at the bottom of their webpages to suggest they were “satirical” even when there was nothing satirical about their articles, to protect them from accusations about being fake. The difference with a satirical form of fake news is that the authors or the host present themselves as a comedian or as an entertainer rather than a journalist informing the public (Collins et al. 2020 ). However, most audiences believed the information passed in this satirical form because the comedian usually projects news from mainstream media and frames them to suit their program (Collins et al. 2020 ).

Propaganda refers to news stories created by political entities to mislead people. It is a special instance of fabricated stories that aim to harm the interests of a particular party and, typically, has a political context (Zannettou et al. 2019 ). Propaganda was widely used during both World Wars (Collins et al. 2020 ) and during the Cold War (Zannettou et al. 2019 ). It is a consequential type of false information as it can change the course of human history (e.g., by changing the outcome of an election) (Zannettou et al. 2019 ). States are the main actors of propaganda. Recently, propaganda has been used by politicians and media organizations to support a certain position or view (Collins et al. 2020 ). Online astroturfing can be an example of the tools used for the dissemination of propaganda. It is a covert manipulation of public opinion (Peng et al. 2017 ) that aims to make it seem that many people share the same opinion about something. Astroturfing can affect different domains of interest, based on which online astroturfing can be mainly divided into political astroturfing, corporate astroturfing and astroturfing in e-commerce or online services (Mahbub et al. 2019 ). Propaganda types of fake news can be debunked with manual fact-based detection models such as the use of expert-based fact-checkers (Collins et al. 2020 ).

Framing refers to employing some aspect of reality to make content more visible, while the truth is concealed (Collins et al. 2020 ) to deceive and misguide readers. People will understand certain concepts based on the way they are coined and invented. An example of framing was provided by Collins et al. ( 2020 ): suppose a leader X says “I will neutralize my opponent”, simply meaning he will beat his opponent in a given election. Such a statement may be framed as “leader X threatens to kill Y”, and this framed statement provides a total misrepresentation of the original meaning.

Conspiracy Theories

Conspiracy theories refer to the belief that an event is the result of secret plots generated by powerful conspirators. Conspiracy belief refers to people’s adoption and belief of conspiracy theories, and it is associated with psychological, political and social factors (Douglas et al. 2019 ). Conspiracy theories are widespread in contemporary democracies (Sutton and Douglas 2020 ), and they have major consequences. For instance, lately and during the COVID-19 pandemic, conspiracy theories have been discussed from a public health perspective (Meese et al. 2020 ; Allington et al. 2020 ; Freeman et al. 2020 ).

Comparison Between Most Popular Intent-based Types of Fake News

Following a review of the most popular intent-based types of fake news, we compare them as shown in Table 5 based on the most common criteria mentioned by researchers in their definitions as listed below.

- the intent behind the news, which refers to whether a given news type was mainly created to intentionally deceive people or not (e.g., humor, irony, entertainment, etc.);

- the way that the news propagates through OSN, which determines the nature of the propagation of each type of fake news and this can be either fast or slow propagation;

- the severity of the impact of the news on OSN users, which refers to whether the public has been highly impacted by the given type of fake news; the mentioned impact of each fake news type is mainly the proportion of the negative impact;

- and the goal behind disseminating the news, which can be to gain popularity for a particular entity (e.g., political party), for profit (e.g., lucrative business), or other reasons such as humor and irony in the case of satire, spreading panic or anger, and manipulating the public in the case of hoaxes, made-up stories about a particular person or entity in the case of rumors, and misguiding readers in the case of framing.

However, the comparison provided in Table 5 is deduced from the studied research papers; it is our point of view, which is not based on empirical data.

We suspect that the most dangerous types of fake news are the ones with high intention to deceive the public, fast propagation through social media, high negative impact on OSN users, and complicated hidden goals and agendas. However, while the other types of fake news are less dangerous, they should not be ignored.

Moreover, it is important to highlight that the types of fake news mentioned above have been shown to overlap, so it is possible to observe false information that falls within multiple categories (Zannettou et al. 2019 ). Here, we provide two examples by Zannettou et al. ( 2019 ) to better understand possible overlaps: (1) a rumor may also use clickbait techniques to increase the audience that will read the story; and (2) propaganda stories can be seen as a special instance of framing stories.

Challenges related to fake news detection and mitigation

To alleviate fake news and its threats, it is crucial to first identify and understand the factors involved that continue to challenge researchers. Thus, the main question is to explore and investigate the factors that make it easier to fall for manipulated information. Despite the tremendous progress made in alleviating some of the challenges in fake news detection (Sharma et al. 2019 ; Zhou and Zafarani 2020 ; Zhang and Ghorbani 2020 ; Shu et al. 2020a ), much more work needs to be accomplished to address the problem effectively.

In this section, we discuss several open issues that have been making fake news detection in social media a challenging problem. These issues can be summarized as follows: content-based issues (i.e., deceptive content that resembles the truth very closely), contextual issues (i.e., lack of user awareness, social bots that spread fake content, and the dynamic nature of OSNs, which leads to fast propagation), as well as the issue of existing datasets (i.e., there is still no one-size-fits-all benchmark dataset for fake news detection). These various aspects have been shown (Shu et al. 2017 ) to have a great impact on the accuracy of fake news detection approaches.

Content-based issue, deceptive content

Automatic fake news detection remains a huge challenge, primarily because the content is designed in a way that it closely resembles the truth. Besides, most deceivers choose their words carefully and use their language strategically to avoid being caught. Therefore, it is often hard to determine its veracity by AI without the reliance on additional information from third parties such as fact-checkers.

Abdullah-All-Tanvir et al. ( 2020 ) reported that fake news tends to have more complicated stories and hardly ever makes any references. It is more likely to contain a greater number of words that express negative emotions. This makes it so complicated that it becomes impossible for a human to manually detect the credibility of this content. Therefore, detecting fake news on social media is quite challenging. Moreover, fake news appears in multiple types and forms, which makes it hard to define a single global solution able to capture and deal with the disseminated content. Consequently, detecting false information is not a straightforward task due to its various types and forms (Zannettou et al. 2019 ).
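As a rough illustration of the negative-emotion cue mentioned above, the following minimal sketch computes the share of negative-emotion words in a text. The lexicon `NEGATIVE_WORDS` and the function name are hypothetical and chosen only for illustration; they are not taken from Abdullah-All-Tanvir et al. ( 2020 ), and a real study would rely on a validated emotion lexicon.

```python
import re

# Hypothetical toy lexicon, for illustration only (not from the reviewed papers).
NEGATIVE_WORDS = frozenset({"angry", "fear", "hate", "terrible", "disaster", "panic"})


def negative_emotion_ratio(text, lexicon=NEGATIVE_WORDS):
    """Return the fraction of tokens in `text` that appear in `lexicon`."""
    tokens = re.findall(r"[a-z']+", text.lower())
    if not tokens:
        return 0.0
    hits = sum(1 for token in tokens if token in lexicon)
    return hits / len(tokens)


ratio = negative_emotion_ratio("Panic and fear spread fast")
```

Such a ratio would be only one weak signal among many; on its own it cannot establish whether a story is false.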

Contextual issues

Contextual issues are challenges that we suspect are related not to the content of the news but to the context of the online news post (i.e., humans are the weakest factor due to lack of user awareness, social bots spreaders, dynamic nature of online social platforms and fast propagation of fake news).

Humans are the weakest factor due to the lack of awareness

Recent statistics 31 show that the percentage of unintentional fake news spreaders (people who share fake news without the intention to mislead) over social media is five times higher than intentional spreaders. Moreover, another recent statistic 32 shows that the percentage of people who were confident about their ability to discern fact from fiction is ten times higher than those who were not confident about the truthfulness of what they are sharing. As a result, we can deduce the lack of human awareness about the ascent of fake news.

Public susceptibility and lack of user awareness (Sharma et al. 2019 ) have always been the most challenging problem when dealing with fake news and misinformation. This is a complex issue because many people believe almost everything on the Internet and the ones who are new to digital technology or have less expertise may be easily fooled (Edgerly et al. 2020 ).

Moreover, it has been widely proven (Metzger et al. 2020 ; Edgerly et al. 2020 ) that people are often motivated to support and accept information that goes with their preexisting viewpoints and beliefs, and reject information that does not fit in as well. Hence, Shu et al. ( 2017 ) illustrate an interesting correlation between fake news spread and psychological and cognitive theories. They further suggest that humans are more likely to believe information that confirms their existing views and ideological beliefs. Consequently, they deduce that humans are naturally not very good at differentiating real information from fake information.

Recent research by Giachanou et al. ( 2020 ) studies the role of personality and linguistic patterns in discriminating between fake news spreaders and fact-checkers. They classify a user as a potential fact-checker or a potential fake news spreader based on features that represent users’ personality traits and linguistic patterns used in their tweets. They show that leveraging personality traits and linguistic patterns can improve the performance in differentiating between checkers and spreaders.

Furthermore, several researchers studied the prevalence of fake news on social networks during (Allcott and Gentzkow 2017 ; Grinberg et al. 2019 ; Guess et al. 2019 ; Baptista and Gradim 2020 ) and after (Garrett and Bond 2021 ) the 2016 US presidential election and found that individuals most likely to engage with fake news sources were generally conservative-leaning, older, and highly engaged with political news.

Metzger et al. ( 2020 ) examine how individuals evaluate the credibility of biased news sources and stories. They investigate the role of both cognitive dissonance and credibility perceptions in selective exposure to attitude-consistent news information. They found that online news consumers tend to perceive attitude-consistent news stories as more accurate and more credible than attitude-inconsistent stories.

Similarly, Edgerly et al. ( 2020 ) explore the impact of news headlines on the audience’s intent to verify whether given news is true or false. They concluded that participants exhibit higher intent to verify the news only when they believe the headline to be true, which is predicted by perceived congruence with preexisting ideological tendencies.

Luo et al. ( 2022 ) evaluate the effects of endorsement cues in social media on message credibility and detection accuracy. Results showed that headlines associated with a high number of likes increased credibility, thereby enhancing detection accuracy for real news but undermining accuracy for fake news. Consequently, they highlight the urgency of empowering individuals to assess both news veracity and endorsement cues appropriately on social media.

Moreover, misinformed people are a greater problem than uninformed people (Kuklinski et al. 2000 ), because the former hold inaccurate opinions (which may concern politics, climate change, medicine) that are harder to correct. Indeed, people find it difficult to update their misinformation-based beliefs even after they have been proved to be false (Flynn et al. 2017 ). Moreover, even if a person has accepted the corrected information, their belief may still affect their opinion (Nyhan and Reifler 2015 ).

Falling for disinformation may also be explained by a lack of critical thinking and of the need for evidence that supports information (Vilmer et al. 2018 ; Badawy et al. 2019 ). However, it is also possible that people choose misinformation because they engage in directionally motivated reasoning (Badawy et al. 2019 ; Flynn et al. 2017 ). Online users are generally vulnerable and tend to perceive social media as trustworthy, as reported by Abdullah-All-Tanvir et al. ( 2019 ), who propose to automate fake news detection.

It is worth noting that in addition to bots causing the outpouring of the majority of the misrepresentations, specific individuals also contribute a large share of this issue (Abdullah-All-Tanvir et al. 2019 ). Furthermore, Vosoughi et al. ( 2018 ) found that, contrary to conventional wisdom, robots have accelerated the spread of real and fake news at the same rate, implying that fake news spreads more than the truth because humans, not robots, are more likely to spread it.

In this case, verified users and those with numerous followers were not necessarily responsible for spreading the misinformation contained in the corrupted posts (Abdullah-All-Tanvir et al. 2019 ).

Viral fake news can cause much havoc to our society. Therefore, to mitigate the negative impact of fake news, it is important to analyze the factors that lead people to fall for misinformation and to further understand why people spread fake news (Cheng et al. 2020 ). Measuring the accuracy, credibility, veracity and validity of news contents can also be a key countermeasure to consider.

Social bots spreaders

Several authors (Shu et al. 2018b , 2017 ; Shi et al. 2019 ; Bessi and Ferrara 2016 ; Shao et al. 2018a ) have also shown that fake news is likely to be created and spread by non-human accounts with similar attributes and structure in the network, such as social bots (Ferrara et al. 2016 ). Bots (short for software robots) have existed since the early days of computers. A social bot is a computer algorithm that automatically produces content and interacts with humans on social media, trying to emulate and possibly alter their behavior (Ferrara et al. 2016 ). Although they are designed to provide a useful service, they can be harmful, for example when they contribute to the spread of unverified information or rumors (Ferrara et al. 2016 ). However, it is important to note that bots are simply tools created and maintained by humans for some specific hidden agendas.

Social bots tend to connect with legitimate users instead of other bots. They try to act like a human with fewer words and fewer followers on social media. This contributes to the forwarding of fake news (Jiang et al. 2019 ). Moreover, there is a difference between bot-generated and human-written clickbait (Le et al. 2019 ).

Many researchers have addressed ways of identifying and analyzing possible sources of fake news spread in social media. Recent research by Shu et al. ( 2020a ) describes social bots’ use of two strategies to spread low-credibility content. First, they amplify interactions with content as soon as it is created to make it look legitimate and to facilitate its spread across social networks. Next, they try to increase public exposure to the created content and thus boost its perceived credibility by targeting influential users that are more likely to believe disinformation in the hope of getting them to “repost” the fabricated content. They further discuss the social bot detection systems taxonomy proposed by Ferrara et al. ( 2016 ), which divides bot detection methods into three classes: (1) graph-based, (2) crowdsourcing and (3) feature-based social bot detection methods.

Similarly, Shao et al. ( 2018a ) examine social bots and how they promote the spread of misinformation through millions of Twitter posts during and following the 2016 US presidential campaign. They found that social bots played a disproportionate role in spreading articles from low-credibility sources by amplifying such content in the early spreading moments and targeting users with many followers through replies and mentions to expose them to this content and induce them to share it.

Ismailov et al. ( 2020 ) assert that the techniques used to detect bots depend on the social platform and the objective. They note that a malicious bot designed to make friends with as many accounts as possible will require a different detection approach than a bot designed to repeatedly post links to malicious websites. Therefore, they identify two models for detecting malicious accounts, each using a different set of features. Social context models achieve detection by examining features related to an account’s social presence including features such as relationships to other accounts, similarities to other users’ behaviors, and a variety of graph-based features. User behavior models primarily focus on features related to an individual user’s behavior, such as frequency of activities (e.g., number of tweets or posts per time interval), patterns of activity and clickstream sequences.
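To make the user behavior features described above more concrete, the sketch below derives simple activity-frequency features (posts per time interval) from a list of post timestamps. The function names, the one-hour default interval and the chosen summary statistics are assumptions made for illustration; they are not the feature set of Ismailov et al. ( 2020 ).

```python
from collections import Counter
from datetime import datetime, timedelta


def posts_per_interval(timestamps, interval=timedelta(hours=1)):
    """Map each time-bucket index (relative to the first post) to its post count."""
    if not timestamps:
        return {}
    start = min(timestamps)
    # timedelta // timedelta yields an integer bucket index.
    return dict(Counter((ts - start) // interval for ts in timestamps))


def activity_features(timestamps, interval=timedelta(hours=1)):
    """Summarize posting frequency for one account (illustrative features only)."""
    buckets = posts_per_interval(timestamps, interval)
    total = sum(buckets.values())
    active = len(buckets)
    return {
        "total_posts": total,
        "active_intervals": active,
        "max_posts_per_interval": max(buckets.values(), default=0),
        "mean_posts_per_active_interval": total / active if active else 0.0,
    }


posts = [
    datetime(2020, 1, 1, 0, 0),
    datetime(2020, 1, 1, 0, 10),
    datetime(2020, 1, 1, 0, 20),
    datetime(2020, 1, 1, 2, 5),
]
features = activity_features(posts)
```

In a feature-based detector, values like these would be combined with many other signals before any classification; an unusually high posting rate alone does not show that an account is a bot.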

Therefore, it is crucial to consider bot detection techniques to distinguish bots from normal users to better leverage user profile features to detect fake news.

However, there is also another “bot-like” strategy that aims to massively promote disinformation and fake content on social platforms, known as bot farms or troll farms. These are not social bots but groups of organized individuals engaging in trolling or bot-like promotion of narratives in a coordinated fashion (Wardle 2018 ), hired to massively spread fake news or any other harmful content. A prominent troll farm example is the Russia-based Internet Research Agency (IRA), which disseminated inflammatory content online to influence the outcome of the 2016 U.S. presidential election. 33 As a result, Twitter suspended accounts connected to the IRA and deleted 200,000 tweets from Russian trolls (Jamieson 2020 ). Another example to mention in this category is review bombing (Moro and Birt 2022 ). Review bombing refers to coordinated groups of people massively performing the same negative actions online (e.g., dislike, negative review/comment) on an online video, game, post, product, etc., in order to reduce its aggregate review score. The review bombers can be both humans and bots coordinated in order to cause harm and mislead people by falsifying facts.

Dynamic nature of online social platforms and fast propagation of fake news

Sharma et al. ( 2019 ) affirm that the fast proliferation of fake news through social networks makes it hard and challenging to assess the information’s credibility on social media. Similarly, Qian et al. ( 2018 ) assert that fake news and fabricated content propagate exponentially at the early stage of its creation and can cause a significant loss in a short amount of time (Friggeri et al. 2014 ) including manipulating the outcome of political events (Liu and Wu 2018 ; Bessi and Ferrara 2016 ).

Moreover, while analyzing the way source and promoters of fake news operate over the web through multiple online platforms, Zannettou et al. ( 2019 ) discovered that false information is more likely to spread across platforms (18% appearing on multiple platforms) compared to real information (11%).

Furthermore, recently, Shu et al. ( 2020c ) attempted to understand the propagation of disinformation and fake news in social media and found that such content is produced and disseminated faster and easier through social media because of the low barriers that prevent doing so. Similarly, Shu et al. ( 2020b ) studied hierarchical propagation networks for fake news detection. They performed a comparative analysis between fake and real news from structural, temporal and linguistic perspectives. They demonstrated the potential of using these features to detect fake news and they showed their effectiveness for fake news detection as well.
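The structural perspective mentioned above can be illustrated with a small sketch that computes basic shape features of a propagation cascade (size, depth and maximum breadth). The edge-list representation and the function name `cascade_features` are assumptions for illustration; this is not the feature set or implementation of Shu et al. ( 2020b ), and it ignores the temporal and linguistic perspectives.

```python
from collections import defaultdict, deque


def cascade_features(root, edges):
    """Compute size, depth and maximum breadth of a propagation tree.

    `edges` is a list of (parent, child) pairs, e.g. (original post, repost).
    """
    children = defaultdict(list)
    for parent, child in edges:
        children[parent].append(child)

    level_sizes = defaultdict(int)  # depth -> number of nodes at that depth
    seen = {root}
    queue = deque([(root, 0)])
    while queue:  # breadth-first traversal from the source post
        node, depth = queue.popleft()
        level_sizes[depth] += 1
        for child in children[node]:
            if child not in seen:
                seen.add(child)
                queue.append((child, depth + 1))

    return {
        "size": len(seen),
        "depth": max(level_sizes),
        "max_breadth": max(level_sizes.values()),
    }


features = cascade_features("a", [("a", "b"), ("a", "c"), ("b", "d"), ("d", "e")])
```

Here the cascade rooted at `"a"` has five nodes, reaches three hops from the source, and has at most two nodes at any one level.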

Lastly, Abdullah-All-Tanvir et al. ( 2020 ) note that it is almost impossible to manually detect the sources and authenticity of fake news effectively and efficiently, due to its fast circulation in such a small amount of time. Therefore, it is crucial to note that the dynamic nature of the various online social platforms, which results in the continued rapid and exponential propagation of such fake content, remains a major challenge that requires further investigation while defining innovative solutions for fake news detection.

Datasets issue

Existing approaches lack an inclusive dataset with derived multidimensional information that captures fake news characteristics and would allow machine learning classification models to achieve higher accuracy (Nyow and Chua 2019 ). These datasets are primarily dedicated to validating the machine learning model and are the ultimate frame of reference to train the model and analyze its performance. Therefore, if a researcher evaluates their model based on an unrepresentative dataset, the validity and the efficiency of the model become questionable when it comes to applying the fake news detection approach in a real-world scenario.

Moreover, several researchers (Shu et al. 2020d ; Wang et al. 2020 ; Pathak and Srihari 2019 ; Przybyla 2020 ) believe that fake news is diverse and dynamic in terms of content, topics, publishing methods and media platforms, and sophisticated linguistic styles geared to emulate true news. Consequently, training machine learning models on such sophisticated content requires large-scale annotated fake news data that are difficult to obtain (Shu et al. 2020d ).

Therefore, datasets are also a great topic to work on to enhance data quality and have better results while defining our solutions. Adversarial learning techniques (e.g., GAN, SeqGAN) can be used to provide machine-generated data that can be used to train deeper models and build robust systems to detect fake examples from the real ones. This approach can be used to counter the lack of datasets and the scarcity of data available to train models.

Fake news detection literature review

Fake news detection in social networks is still in the early stage of development and there are still challenging issues that need further investigation. This has become an emerging research area that is attracting huge attention.

There are various research studies on fake news detection in online social networks. Few of them have focused on the automatic detection of fake news using artificial intelligence techniques. In this section, we review the existing approaches used in automatic fake news detection, as well as the techniques that have been adopted. Then, a critical discussion built on a primary classification scheme based on a specific set of criteria is also emphasized.

Categories of fake news detection

In this section, we give an overview of most of the existing automatic fake news detection solutions adopted in the literature. A recent classification by Sharma et al. ( 2019 ) uses three categories of fake news identification methods. Each category is further divided based on the type of existing methods (i.e., content-based, feedback-based and intervention-based methods). However, a review of the literature for fake news detection in online social networks shows that the existing studies can be classified into broader categories based on two major aspects that most authors inspect and make use of to define an adequate solution. These aspects can be considered as major sources of extracted information used for fake news detection and can be summarized as follows: the content-based (i.e., related to the content of the news post) and the contextual aspect (i.e., related to the context of the news post).

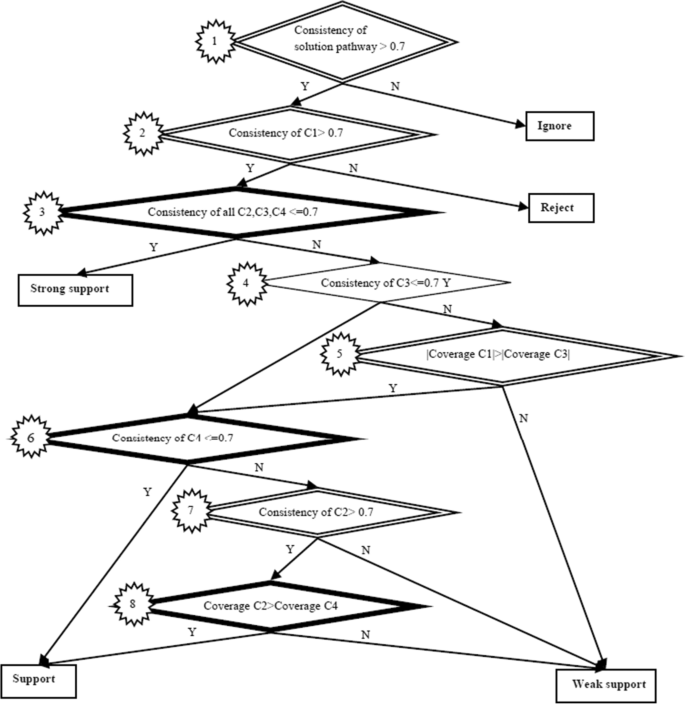

Consequently, the studies we reviewed can be classified into three different categories based on the two aspects mentioned above (the third category is hybrid). As depicted in Fig. 5 , fake news detection solutions can be categorized as news content-based approaches, the social context-based approaches that can be divided into network and user-based approaches, and hybrid approaches. The latter combines both content-based and contextual approaches to define the solution.

Classification of fake news detection approaches

News Content-based Category

News content-based approaches are fake news detection approaches that use content information (i.e., information extracted from the content of the news post) and that focus on studying and exploiting the news content in their proposed solutions. Content refers to the body of the news, including source, headline, text and image-video, which can reflect subtle differences.

Researchers of this category rely on content-based detection cues (i.e., text and multimedia-based cues), which are features extracted from the content of the news post. Text-based cues are features extracted from the text of the news, whereas multimedia-based cues are features extracted from the images and videos attached to the news. Figure 6 summarizes the most widely used news content representation (i.e., text and multimedia/images) and detection techniques (i.e., machine learning (ML), deep Learning (DL), natural language processing (NLP), fact-checking, crowdsourcing (CDS) and blockchain (BKC)) in news content-based category of fake news detection approaches. Most of the reviewed research works based on news content for fake news detection rely on the text-based cues (Kapusta et al. 2019 ; Kaur et al. 2020 ; Vereshchaka et al. 2020 ; Ozbay and Alatas 2020 ; Wang 2017 ; Nyow and Chua 2019 ; Hosseinimotlagh and Papalexakis 2018 ; Abdullah-All-Tanvir et al. 2019 , 2020 ; Mahabub 2020 ; Bahad et al. 2019 ; Hiriyannaiah et al. 2020 ) extracted from the text of the news content including the body of the news and its headline. However, a few researchers such as Vishwakarma et al. ( 2019 ) and Amri et al. ( 2022 ) try to recognize text from the associated image.
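As a minimal sketch of what text-based cues can look like in practice, the following function extracts a few surface features from a headline and a body. The specific features (word counts, share of all-caps headline words, exclamation marks) and the name `text_cues` are illustrative assumptions, not the features used in the works cited above.

```python
import re


def text_cues(headline, body):
    """Extract a few illustrative surface cues from a news headline and body."""
    headline_words = re.findall(r"[A-Za-z']+", headline)
    body_words = re.findall(r"[A-Za-z']+", body)
    # Words written fully in capitals (single letters such as "I" are ignored).
    all_caps = [w for w in headline_words if len(w) > 1 and w.isupper()]
    return {
        "headline_word_count": len(headline_words),
        "body_word_count": len(body_words),
        "headline_all_caps_ratio": (
            len(all_caps) / len(headline_words) if headline_words else 0.0
        ),
        "exclamation_count": headline.count("!") + body.count("!"),
    }


cues = text_cues("SHOCKING news you MUST read!", "It happened. Really!")
```

In the reviewed approaches, hand-crafted cues of this kind are typically fed to ML models or replaced by learned representations in DL models.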

News content-based category: news content representation and detection techniques

Most researchers of this category rely on artificial intelligence (AI) techniques (such as ML, DL and NLP models) to improve performance in terms of prediction accuracy. Others use different techniques such as fact-checking, crowdsourcing and blockchain. Specifically, the AI- and ML-based approaches in this category are trying to extract features from the news content, which they use later for content analysis and training tasks. In this particular case, the extracted features are the different types of information considered to be relevant for the analysis. Feature extraction is considered as one of the best techniques to reduce data size in automatic fake news detection. This technique aims to choose a subset of features from the original set to improve classification performance (Yazdi et al. 2020 ).
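Choosing a subset of features, as described above, can be sketched with a simple filter method: rank each feature by the absolute Pearson correlation between its values and the labels, then keep the top k. This particular criterion and the names `pearson` and `select_top_k` are assumptions for illustration; the source does not specify which selection technique Yazdi et al. ( 2020 ) use.

```python
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (0.0 if one is constant)."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def select_top_k(features, labels, k):
    """Return the names of the k features most correlated with the labels."""
    scores = {name: abs(pearson(values, labels)) for name, values in features.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]


# Toy data: each feature has one value per news item; labels are 1 (fake) or 0 (real).
features = {
    "exclamation_count": [0, 0, 1, 1],
    "has_image": [1, 0, 1, 0],
    "constant_feature": [5, 5, 5, 5],
}
labels = [0, 0, 1, 1]
selected = select_top_k(features, labels, k=1)
```

A correlation filter only captures linear, per-feature relevance, so it is best read as one possible baseline rather than a recommended method.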

Table 6 lists the distinct features and metadata, as well as the used datasets in the news content-based category of fake news detection approaches.

The features and datasets used in the news content-based approaches

a https://www.kaggle.com/anthonyc1/gathering-real-news-for-oct-dec-2016 , last access date: 26-12-2022

b https://mediabiasfactcheck.com/ , last access date: 26-12-2022

c https://github.com/KaiDMML/FakeNewsNet , last access date: 26-12-2022

d https://www.kaggle.com/anthonyc1/gathering-real-news-for-oct-dec-2016 , last access date: 26-12-2022

e https://www.cs.ucsb.edu/~william/data/liar_dataset.zip , last access date: 26-12-2022

f https://www.kaggle.com/mrisdal/fake-news , last access date: 26-12-2022

g https://github.com/BuzzFeedNews/2016-10-facebook-fact-check , last access date: 26-12-2022

h https://www.politifact.com/subjects/fake-news/ , last access date: 26-12-2022

i https://www.kaggle.com/rchitic17/real-or-fake , last access date: 26-12-2022

j https://www.kaggle.com/jruvika/fake-news-detection , last access date: 26-12-2022

k https://github.com/MKLab-ITI/image-verification-corpus , last access date: 26-12-2022

l https://drive.google.com/file/d/14VQ7EWPiFeGzxp3XC2DeEHi-BEisDINn/view , last access date: 26-12-2022

Social Context-based Category

Unlike news content-based solutions, the social context-based approaches capture the skeptical social context of the online news (Zhang and Ghorbani 2020 ) rather than focusing on the news content. The social context-based category contains fake news detection approaches that use the contextual aspects (i.e., information related to the context of the news post). These aspects are based on social context and they offer additional information to help detect fake news. They are the surrounding data outside of the fake news article itself, which can be an essential part of automatic fake news detection. Some useful examples of contextual information may include checking if the news itself and the source that published it are credible, checking the date of the news or the supporting resources, and checking if any other online news platforms are reporting the same or similar stories (Zhang and Ghorbani 2020 ).

Social context-based aspects can be classified into two subcategories, user-based and network-based, and they can be used for context analysis and training tasks in the case of AI- and ML-based approaches. User-based aspects refer to information captured from OSN users such as user profile information (Shu et al. 2019b ; Wang et al. 2019c ; Hamdi et al. 2020 ; Nyow and Chua 2019 ; Jiang et al. 2019 ) and user behavior (Cardaioli et al. 2020 ) such as user engagement (Uppada et al. 2022 ; Jiang et al. 2019 ; Shu et al. 2018b ; Nyow and Chua 2019 ) and response (Zhang et al. 2019a ; Qian et al. 2018 ). Meanwhile, network-based aspects refer to information captured from the properties of the social network where the fake content is shared and disseminated such as news propagation path (Liu and Wu 2018 ; Wu and Liu 2018 ) (e.g., propagation times and temporal characteristics of propagation), diffusion patterns (Shu et al. 2019a ) (e.g., number of retweets, shares), as well as user relationships (Mishra 2020 ; Hamdi et al. 2020 ; Jiang et al. 2019 ) (e.g., friendship status among users).
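To make the two subcategories concrete, the following minimal sketch derives a few user-based and network-based features from a toy sharing cascade. It is only an illustration: the `Share` record, its field names and the chosen features are assumptions made for this example and are not taken from any of the cited approaches.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Share:
    """One share of a news post (hypothetical record layout)."""
    user_id: str
    followers: int           # user-based: profile information
    account_age_days: int    # user-based: profile information
    timestamp: float         # seconds since the original post
    parent: Optional[str]    # user the post was reshared from; None for the original poster


def social_context_features(shares):
    """Return user-based and network-based features for one sharing cascade.

    Assumes the cascade is a tree: every parent appears in `shares` and there are no cycles.
    """
    parent_of = {s.user_id: s.parent for s in shares}

    def depth(user_id):
        # Number of reshare hops between this user and the original poster.
        hops = 0
        while parent_of.get(user_id) is not None:
            user_id = parent_of[user_id]
            hops += 1
        return hops

    times = [s.timestamp for s in shares]
    return {
        # User-based aspects
        "mean_followers": mean(s.followers for s in shares),
        "mean_account_age_days": mean(s.account_age_days for s in shares),
        # Network-based aspects
        "num_reshares": sum(1 for s in shares if s.parent is not None),
        "cascade_depth": max(depth(s.user_id) for s in shares),
        "time_span_seconds": max(times) - min(times),
    }
```

A feature dictionary of this kind could then be passed to any classifier, alone or together with content-based features.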

Figure 7 summarizes some of the most widely adopted social context representations, as well as the most used detection techniques (i.e., AI, ML, DL, fact-checking and blockchain), in the social context-based category of approaches.

Social context-based category: social context representation and detection techniques

Table 7 lists the distinct features and metadata, the adopted detection cues, as well as the used datasets, in the context-based category of fake news detection approaches.

The features, detection cues and datasets used in the social context-based approaches

a https://www.dropbox.com/s/7ewzdrbelpmrnxu/rumdetect2017.zip , last access date: 26-12-2022

b https://snap.stanford.edu/data/ego-Twitter.html , last access date: 26-12-2022

Hybrid approaches

Most researchers focus on employing a specific method rather than a combination of both content- and context-based methods. This is because some of them (Wu and Rao 2020 ) believe that there are still challenging limitations in traditional fusion strategies due to existing feature correlations and semantic conflicts. For this reason, some researchers focus on extracting content-based information, while others capture social context-based information for their proposed approaches.

However, it has proven challenging to successfully automate fake news detection based on just a single type of feature (Ruchansky et al. 2017 ). Therefore, recent work tends to combine news content-based and social context-based approaches for fake news detection.

Table 8 lists the distinct features and metadata, as well as the used datasets, in the hybrid category of fake news detection approaches.

The features and datasets used in the hybrid approaches

Fake news detection techniques

Another way to classify automatic fake news detection is by the techniques used in the literature. Hence, we classify the detection methods into three technique-based groups:

- Human-based techniques: This category mainly includes the use of crowdsourcing and fact-checking techniques, which rely on human knowledge to check and validate the veracity of news content.

- Artificial Intelligence-based techniques: This category includes the most used AI approaches for fake news detection in the literature. Specifically, these are the approaches in which researchers use classical ML, deep learning techniques such as convolutional neural networks (CNN) and recurrent neural networks (RNN), as well as natural language processing (NLP).

- Blockchain-based techniques: This category includes solutions using blockchain technology to detect and mitigate fake news in social media by checking source reliability and establishing the traceability of the news content.

Human-based Techniques

One specific research direction for fake news detection consists of using human-based techniques such as crowdsourcing (Pennycook and Rand 2019 ; Micallef et al. 2020 ) and fact-checking (Vlachos and Riedel 2014 ; Chung and Kim 2021 ; Nyhan et al. 2020 ) techniques.

These approaches can be considered as low computational requirement techniques since both rely on human knowledge and expertise for fake news detection. However, fake news identification cannot be addressed solely through human force since it demands a lot of effort in terms of time and cost, and it is ineffective in terms of preventing the fast spread of fake content.

Crowdsourcing. Crowdsourcing approaches (Kim et al. 2018 ) are based on the “wisdom of the crowds” (Collins et al. 2020 ) for fake content detection. These approaches rely on the collective contributions and crowd signals (Tschiatschek et al. 2018 ) of a group of people for the aggregation of crowd intelligence to detect fake news (Tchakounté et al. 2020 ) and to reduce the spread of misinformation on social media (Pennycook and Rand 2019 ; Micallef et al. 2020 ).

Micallef et al. ( 2020 ) highlight the role of the crowd in countering misinformation. They suspect that concerned citizens (i.e., the crowd), who use platforms where disinformation appears, can play a crucial role in spreading fact-checking information and in combating the spread of misinformation.

Recently, Tchakounté et al. ( 2020 ) proposed a voting system as a new method of binary aggregation of the opinions of the crowd and the knowledge of a third-party expert. The aggregator is based on majority voting on the crowd side and weighted averaging on the third-party side.
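The two aggregation rules named above can be sketched as follows. This is a hedged illustration and not the authors' actual system: the function names, the convention that 1 means "fake", the `crowd_weight` and `threshold` parameters, and the way the crowd and expert sides are combined in `aggregate` are all assumptions made for this example.

```python
def majority_vote(crowd_votes):
    """Return 1 (fake) if strictly more than half of the binary votes are 1, else 0."""
    if not crowd_votes:
        raise ValueError("at least one crowd vote is required")
    return 1 if 2 * sum(crowd_votes) > len(crowd_votes) else 0


def weighted_average(scores, weights):
    """Weighted mean of expert scores in [0, 1]; weights express trust in each expert."""
    if len(scores) != len(weights) or not scores:
        raise ValueError("scores and weights must be non-empty and of equal length")
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(s * w for s, w in zip(scores, weights)) / total_weight


def aggregate(crowd_votes, expert_scores, expert_weights,
              crowd_weight=0.5, threshold=0.5):
    """Combine both sides into one binary decision (assumed combination rule)."""
    crowd_side = majority_vote(crowd_votes)
    expert_side = weighted_average(expert_scores, expert_weights)
    combined = crowd_weight * crowd_side + (1 - crowd_weight) * expert_side
    return 1 if combined >= threshold else 0
```

For instance, `aggregate([1, 1, 0], [0.2, 0.4], [1, 3])` takes the crowd majority (fake) and blends it with the weighted expert score before thresholding. How much weight each side should receive is a design choice that this sketch leaves open.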

Similarly, Huffaker et al. ( 2020 ) propose a crowdsourced detection of emotionally manipulative language. They introduce an approach that transforms classification problems into a comparison task to mitigate conflation content by allowing the crowd to detect text that uses manipulative emotional language to sway users toward positions or actions. The proposed system leverages anchor comparison to distinguish between intrinsically emotional content and emotionally manipulative language.

La Barbera et al. ( 2020 ) try to understand how people perceive the truthfulness of information presented to them. They collect data from US-based crowd workers, build a dataset of crowdsourced truthfulness judgments for political statements, and compare it with expert annotation data generated by fact-checkers such as PolitiFact.

Coscia and Rossi ( 2020 ) introduce a crowdsourced flagging system that consists of online news flagging. The bipolar model of news flagging attempts to capture the main ingredients that they observe in empirical research on fake news and disinformation.

Unlike the previously mentioned researchers who focus on news content in their approaches, Pennycook and Rand ( 2019 ) focus on using crowdsourced judgments of the quality of news sources to combat social media disinformation.

Fact-Checking. The fact-checking task is commonly performed manually by journalists to verify the truthfulness of a given claim. Indeed, fact-checking features are being adopted by multiple online social network platforms. For instance, Facebook 34 started addressing false information through independent fact-checkers in 2017, followed by Google 35 the same year. Two years later, Instagram 36 followed suit. However, the usefulness of fact-checking initiatives is questioned by journalists 37 , as well as by researchers such as Andersen and Søe ( 2020 ). On the other hand, work is being conducted to boost the effectiveness of these initiatives to reduce misinformation (Chung and Kim 2021 ; Clayton et al. 2020 ; Nyhan et al. 2020 ).

Most researchers use fact-checking websites (e.g., politifact.com, 38 snopes.com, 39 and Reuters 40 ) as data sources to build their datasets and train their models. Therefore, in the following, we specifically review examples of solutions that use fact-checking (Vlachos and Riedel 2014 ) to help build datasets that can be further used in the automatic detection of fake content.

Yang et al. ( 2019a ) use the PolitiFact fact-checking website as a data source to train, tune, and evaluate their model, named XFake, on political data. The XFake system is an explainable fake news detector that helps end users assess news credibility. The fakeness of news items is detected and interpreted considering both content (e.g., statements) and contextual information (e.g., speaker).

Based on the idea that fact-checkers cannot clean all data and must select what “matters the most” to clean while checking a claim, Sintos et al. ( 2019 ) propose a solution to help fact-checkers combat problems related to data quality (where inaccurate data lead to incorrect conclusions) and data phishing. The proposed solution combines data cleaning and perturbation analysis to avoid uncertainties and errors in data and the possibility that data can be phished.

Tchechmedjiev et al. ( 2019 ) propose a system named “ClaimsKG”, a knowledge graph of fact-checked claims that aims to facilitate structured queries about their truth values, authors, dates, journalistic reviews and other kinds of metadata. “ClaimsKG” designs the relationship between vocabularies. To gather vocabularies, a semi-automated pipeline periodically collects data from popular fact-checking websites.

AI-based Techniques

Previous work by Yaqub et al. ( 2020 ) has shown that people lack trust in automated solutions for fake news detection. However, work is already being undertaken to increase this trust, for instance by von der Weth et al. ( 2020 ).

Most researchers consider fake news detection as a classification problem and use artificial intelligence techniques, as shown in Fig. 8 . The adopted AI techniques may include machine learning ML (e.g., Naïve Bayes, logistic regression, support vector machine SVM), deep learning DL (e.g., convolutional neural networks CNN, recurrent neural networks RNN, long short-term memory LSTM) and natural language processing NLP (e.g., Count vectorizer, TF-IDF Vectorizer). Most of them combine many AI techniques in their solutions rather than relying on one specific approach.

Examples of the most widely used AI techniques for fake news detection

Many researchers are developing machine learning models in their solutions for fake news detection. Recently, deep neural network techniques are also being employed as they are generating promising results (Islam et al. 2020 ). A neural network is a massively parallel distributed processor with simple units that can store important information and make it available for use (Hiriyannaiah et al. 2020 ). Moreover, it has been proven (Cardoso Durier da Silva et al. 2019 ) that the most widely used method for automatic detection of fake news is not simply a classical machine learning technique, but rather a fusion of classical techniques coordinated by a neural network.

Some researchers define purely machine learning models (Del Vicario et al. 2019 ; Elhadad et al. 2019 ; Aswani et al. 2017 ; Hakak et al. 2021 ; Singh et al. 2021 ) in their fake news detection approaches. The more commonly used machine learning algorithms (Abdullah-All-Tanvir et al. 2019 ) for classification problems are Naïve Bayes, logistic regression and SVM.
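As an illustration of treating fake news detection as a classification problem, the sketch below pairs a simple count-based bag-of-words representation with a multinomial Naïve Bayes classifier, written with the Python standard library only. It is a toy example under stated assumptions: the class name, the whitespace tokenizer and the choice of Laplace smoothing are ours, and the cited works typically rely on established libraries and far larger labeled datasets.

```python
import math
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase whitespace tokenizer (a deliberately simple assumption)."""
    return text.lower().split()


class NaiveBayesTextClassifier:
    """Multinomial Naive Bayes over word counts with Laplace (add-one) smoothing."""

    def fit(self, texts, labels):
        self.class_counts = Counter(labels)        # documents per class
        self.word_counts = defaultdict(Counter)    # word frequencies per class
        for text, label in zip(texts, labels):
            self.word_counts[label].update(tokenize(text))
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        return self

    def predict(self, text):
        n_docs = sum(self.class_counts.values())
        best_label, best_score = None, -math.inf
        for label, n_label in self.class_counts.items():
            total_words = sum(self.word_counts[label].values())
            score = math.log(n_label / n_docs)     # log prior
            for word in tokenize(text):
                if word not in self.vocab:
                    continue                       # ignore words never seen in training
                count = self.word_counts[label][word]
                score += math.log((count + 1) / (total_words + len(self.vocab)))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Tiny made-up training set, for illustration only.
train_texts = [
    "shocking miracle cure doctors hate",
    "senate passes budget bill",
    "aliens secretly control the senate shocking",
    "central bank raises interest rates",
]
train_labels = ["fake", "real", "fake", "real"]
model = NaiveBayesTextClassifier().fit(train_texts, train_labels)
```

Calling `model.predict("shocking miracle cure")` then returns the label whose word statistics best match the input. Replacing raw counts with TF-IDF weights, or swapping the classifier for logistic regression or an SVM, changes only the scoring step.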

Other researchers (Wang et al. 2019c ; Wang 2017 ; Liu and Wu 2018 ; Mishra 2020 ; Qian et al. 2018 ; Zhang et al. 2020 ; Goldani et al. 2021 ) prefer to combine different deep learning models, without combining them with classical machine learning techniques. Some even show that deep learning techniques outperform traditional machine learning techniques (Mishra et al. 2022 ). Deep learning is one of the most popular research topics in machine learning. Unlike traditional machine learning approaches, which are based on manually crafted features, deep learning approaches can learn hidden representations from simpler inputs both in context and content variations (Bondielli and Marcelloni 2019 ). Moreover, traditional machine learning algorithms almost always require structured data and are designed to “learn” to act by understanding labeled data and then use it to produce new results with more datasets, which requires human intervention to “teach them” when the result is incorrect (Parrish 2018 ). In contrast, deep learning networks rely on layers of artificial neural networks (ANN) and do not require human intervention, as multilevel layers in neural networks place data in a hierarchy of different concepts, which ultimately learn from their own mistakes (Parrish 2018 ). The two most widely implemented paradigms in deep neural networks are recurrent neural networks (RNN) and convolutional neural networks (CNN).

Still other researchers (Abdullah-All-Tanvir et al. 2019 ; Kaliyar et al. 2020 ; Zhang et al. 2019a ; Deepak and Chitturi 2020 ; Shu et al. 2018a ; Wang et al. 2019c ) prefer to combine traditional machine learning and deep learning classification models. A few combine deep learning models with natural language processing (Vereshchaka et al. 2020 ). Others (Kapusta et al. 2019 ; Ozbay and Alatas 2020 ; Ahmed et al. 2020 ) combine natural language processing with machine learning models. Furthermore, some (Abdullah-All-Tanvir et al. 2019 ; Kaur et al. 2020 ; Kaliyar 2018 ; Abdullah-All-Tanvir et al. 2020 ; Bahad et al. 2019 ) prefer to combine all the previously mentioned techniques (i.e., ML, DL and NLP) in their approaches.

Table 11 , which is relegated to the Appendix (after the bibliography) because of its size, shows a comparison of the fake news detection solutions that we have reviewed based on their main approaches, the methodology that was used and the models.

Comparison of AI-based fake news detection techniques

Blockchain-based Techniques for Source Reliability and Traceability

Another research direction for detecting and mitigating fake news in social media focuses on using blockchain solutions. Blockchain technology has recently attracted researchers’ attention due to the interesting features it offers. Immutability, decentralization, tamper resistance, consensus, record keeping and non-repudiation of transactions are some of the key features that make blockchain technology exploitable, not just for cryptocurrencies, but also to prove the authenticity and integrity of digital assets.
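The integrity property mentioned above rests on hash linking: each record stores the hash of its predecessor, so altering any earlier record invalidates every later link. The sketch below shows only this tamper-evidence mechanism on a single machine, using the standard `hashlib` and `json` modules. It does not model decentralization or consensus, and the block layout and function names are hypothetical choices for this example.

```python
import hashlib
import json

GENESIS_PREV_HASH = "0" * 64  # placeholder predecessor hash for the first block


def block_hash(index, prev_hash, payload):
    """SHA-256 over a canonical JSON encoding of the block contents."""
    record = json.dumps(
        {"index": index, "prev_hash": prev_hash, "payload": payload},
        sort_keys=True,
    )
    return hashlib.sha256(record.encode("utf-8")).hexdigest()


def append_block(chain, payload):
    """Append a news record (any JSON-serializable dict) linked to the previous block."""
    index = len(chain)
    prev_hash = chain[-1]["hash"] if chain else GENESIS_PREV_HASH
    block = {
        "index": index,
        "prev_hash": prev_hash,
        "payload": payload,
        "hash": block_hash(index, prev_hash, payload),
    }
    chain.append(block)
    return block


def verify_chain(chain):
    """Return True only if every block is intact and correctly linked to its predecessor."""
    prev_hash = GENESIS_PREV_HASH
    for index, block in enumerate(chain):
        if block["index"] != index or block["prev_hash"] != prev_hash:
            return False
        if block["hash"] != block_hash(index, prev_hash, block["payload"]):
            return False
        prev_hash = block["hash"]
    return True
```

If a payload such as `{"source": "example-news.test", "text": "..."}` is appended and later modified in place, `verify_chain` returns `False`, which is the basis for tracing whether a news item still matches what its source originally published.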