How To Write a Critical Appraisal

A critical appraisal is an academic approach that systematically identifies the strengths and weaknesses of a research article in order to evaluate the usefulness and validity of its findings. As with all essays, you need to be clear, concise, and logical in presenting your arguments, analysis, and evaluation. However, a critical appraisal has some specific sections that need to be considered, and these will form the main basis of your work.

Structure of a Critical Appraisal

Introduction

Your introduction should introduce the work to be appraised and explain how you intend to proceed. In other words, set out how you will assess the article and the criteria you will use. Focusing your introduction on these areas ensures that your readers understand your purpose and want to read on. It should be clear that you are undertaking a scientific and literary dissection and examination of the work to assess its validity and credibility, while still presenting this in an engaging way.

Body of the Work

The body of the work should be separated into clear paragraphs that cover each section of the article, with sub-sections for each point being covered. In all paragraphs, your perspectives should be backed up with hard evidence from credible sources (fully cited and referenced at the end) rather than expressed as opinion or your own personal point of view. Remember that a critical appraisal weighs strengths as well as weaknesses; it is not simply a presentation of the negative parts of the work.

When appraising the introduction of the article, ask yourself whether the article answers the main question it poses. Alongside this, look at the date of publication: generally, you want works to be from the past 5 years, unless they are seminal works that have strongly influenced subsequent developments in the field. Identify whether the journal in which the article was published is peer reviewed and, importantly, whether a hypothesis has been presented. Be objective, concise, and coherent in your presentation of this information.

Once you have appraised the introduction, you can move on to the methods (or the body of the text if the work is not scientific or experimental in nature). To appraise the methods effectively, examine whether the approaches used to draw conclusions (i.e., the methodology) are appropriate for the research question or overall topic. If they are not, explain why in your appraisal, with evidence to support your reasoning. Examine the sample population (if there is one) or the data gathered, and evaluate whether it is appropriate, sufficient, and viable, before considering the data collection methods and survey instruments used. Are they fit for purpose? Do they meet the needs of the paper? Again, your arguments should be backed up by strong sources with credible foundations and origins.

Among the most significant areas of appraisal are the results and conclusions presented by the authors. For the results, identify whether facts and figures are presented to confirm the findings, and assess whether any statistical tests used are viable, reliable, and appropriate to the work conducted, and whether they have been clearly explained and introduced. You also need to present evidence that the authors have reported their results in an unbiased and objective way or, if not, evidence of how they have been biased. In this section you should also dissect the results, identifying whether any reported statistical significance is accurate and whether the results presented and discussed align with the tables and figures.
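One way to check whether a reported statistical significance is accurate is to recompute the p-value from the test statistic the authors report. The Python sketch below assumes the paper reports a z statistic from a two-sided test; the function names and the 0.005 rounding tolerance are illustrative assumptions, not a standard. A t, chi-square, or F statistic would need its own distribution and degrees of freedom, and a result reported only as "p < 0.05" should be checked as an inequality rather than an exact match.

```python
import math


def two_sided_p_from_z(z: float) -> float:
    """Two-sided p-value for a standard normal test statistic z."""
    # For Z ~ N(0, 1), P(|Z| >= |z|) = erfc(|z| / sqrt(2))
    return math.erfc(abs(z) / math.sqrt(2))


def p_value_consistent(z: float, reported_p: float, tol: float = 0.005) -> bool:
    """Hypothetical helper: does the reported p-value match the recomputed
    one to within a rounding tolerance? (tol is an assumed value.)"""
    return abs(two_sided_p_from_z(z) - reported_p) <= tol


if __name__ == "__main__":
    # A paper reporting z = 1.96 with p = 0.05 is internally consistent
    print(round(two_sided_p_from_z(1.96), 3))  # 0.05
    print(p_value_consistent(1.96, 0.05))      # True
    # z = 1.20 gives p of about 0.23, so a reported p = 0.03 would be a red flag
    print(p_value_consistent(1.20, 0.03))      # False
```

A mismatch does not prove an error (the authors may have used a different test), but it tells you exactly which figure to question in your appraisal.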

The final element of the body text is the appraisal of the discussion and conclusion sections. Here you need to identify whether the authors have drawn realistic conclusions from their available data, whether they have acknowledged clear limitations to their work, and whether their conclusions are the same as those you would have drawn had you been presented with the findings.

The conclusion of the appraisal should not introduce any new information; it should be a concise summing up of the key points identified in the body text, a condensation (or précis) of all that you have already written. The aim is to bring together the whole paper and to state an opinion, based on evaluated evidence, of how valid and reliable the appraised paper is within its subject area. In all cases, you should cite and reference all sources used. To help you achieve a first-class critical appraisal, we have put together some key phrases that can help lift your work above that of others.

Key Phrases for a Critical Appraisal

- Whilst the title might suggest…

- The focus of the work appears to be…

- The author challenges the notion that…

- The author makes the claim that…

- The article makes a strong contribution through…

- The approach provides the opportunity to…

- The authors consider…

- The argument is not entirely convincing because…

- However, whilst it can be agreed that… it should also be noted that…

- Several crucial questions are left unanswered…

- It would have been more appropriate to have stated that…

- This framework extends and increases…

- The authors correctly conclude that…

- The authors' efforts can be considered as…

- Less convincing is the generalisation that…

- This appears to mislead readers by indicating that…

- This research proves to be timely and particularly significant in the light of…


33 Critical Analysis Examples

Critical analysis refers to the ability to examine something in detail in preparation for making an evaluation or judgment.

It will involve exploring underlying assumptions, theories, arguments, evidence, logic, biases, contextual factors, and so forth, that could help shed more light on the topic.

In essay writing, a critical analysis essay will involve using a range of analytical skills to explore a topic, such as:

- Evaluating sources

- Exploring strengths and weaknesses

- Exploring pros and cons

- Questioning and challenging ideas

- Comparing and contrasting ideas


Critical Analysis Examples

1. Exploring Strengths and Weaknesses

Perhaps the first and most straightforward method of critical analysis is to create a simple strengths-vs-weaknesses comparison.

Most things have both strengths and weaknesses – you could even do this for yourself! What are your strengths? Maybe you’re kind or good at sports or good with children. What are your weaknesses? Maybe you struggle with essay writing or concentration.

If you can analyze your own strengths and weaknesses, then you understand the concept. What might be the strengths and weaknesses of the idea you’re hoping to critically analyze?

Strengths and weaknesses could include:

- Does it seem highly ethical (strength) or could it be more ethical (weakness)?

- Is it clearly explained (strength) or complex and lacking logical structure (weakness)?

- Does it seem balanced (strength) or biased (weakness)?

You may consider using a SWOT analysis for this step. I've provided a separate SWOT analysis guide.

2. Evaluating Sources

Evaluation of sources refers to looking at whether a source is reliable or unreliable.

This is a fundamental media literacy skill.

Steps involved in evaluating sources include asking questions like:

- Who is the author and are they trustworthy?

- Is this written by an expert?

- Has this been sufficiently reviewed by an expert?

- Is this published in a trustworthy publication?

- Are the arguments sound or common sense?

For more on this topic, I'd recommend my detailed guide on digital literacy.

3. Identifying Similarities

Identifying similarities encompasses the act of drawing parallels between elements, concepts, or issues.

In critical analysis, it’s common to compare a given article, idea, or theory to another one. In this way, you can identify areas in which they are alike.

Determining similarities can be a challenge, but it's an intellectual exercise that fosters a greater understanding of the aspects you're studying. This step often calls for careful reading and note-taking to highlight matching information, points of view, arguments, or even suggested solutions.

Similarities might be found in:

- The key themes or topics discussed

- The theories or principles used

- The demographic the work is written for or about

- The solutions or recommendations proposed

Remember, the intention of identifying similarities is not to prove one right or wrong. Rather, it sets the foundation for understanding the larger context of your analysis, anchoring your arguments in a broader spectrum of ideas.

Your critical analysis strengthens when you can see the patterns and connections across different works or topics. It fosters a more comprehensive, insightful perspective. And importantly, it is a stepping stone in your analysis journey towards evaluating differences, which is equally imperative and insightful in any analysis.

4. Identifying Differences

Identifying differences involves pinpointing the unique aspects, viewpoints or solutions introduced by the text you’re analyzing. How does it stand out as different from other texts?

To do this, you’ll need to compare this text to another text.

Differences can be revealed in:

- The potential applications of each idea

- The time, context, or place in which the elements were conceived or implemented

- The available evidence each element uses to support its ideas

- The perspectives of authors

- The conclusions reached

Identifying differences helps to reveal the multiplicity of perspectives and approaches on a given topic. Doing so provides a more in-depth, nuanced understanding of the field or issue you’re exploring.

This deeper understanding can greatly enhance your overall critique of the text you’re looking at. As such, learning to identify both similarities and differences is an essential skill for effective critical analysis.

My favorite tool for identifying similarities and differences is a Venn diagram.

To use a Venn diagram, label each of the two circles with one of the texts you are comparing. Then place the similarities in the area where the circles overlap, and each text's unique characteristics (differences) in the non-overlapping parts.
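If you keep your notes on each text as short labelled features, the three regions of a two-circle Venn diagram map directly onto set operations. This is a minimal Python sketch, assuming you have already reduced each text to a set of comparable feature labels; the function name and the example features are hypothetical.

```python
from __future__ import annotations


def venn_compare(text_a: set[str], text_b: set[str]) -> dict[str, set[str]]:
    """Sort the features noted for two texts into the three regions of a
    two-circle Venn diagram."""
    return {
        "only_a": text_a - text_b,  # differences: unique to text A
        "both": text_a & text_b,    # similarities: the overlapping area
        "only_b": text_b - text_a,  # differences: unique to text B
    }


if __name__ == "__main__":
    # Hypothetical notes on two articles about the same topic
    article_a = {"theme: student wellbeing", "method: interviews", "sample: undergraduates"}
    article_b = {"theme: student wellbeing", "method: survey", "sample: undergraduates"}

    for region, items in venn_compare(article_a, article_b).items():
        print(region, sorted(items))
    # only_a ['method: interviews']
    # both ['sample: undergraduates', 'theme: student wellbeing']
    # only_b ['method: survey']
```

The hard part remains the reading: two features only land in the overlap if you describe them with the same label, so deciding what counts as "the same" point is itself part of your analysis.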

6. Identifying Oversights

Identifying oversights entails pointing out what the author missed, overlooked, or neglected in their work.

Almost every written work, no matter the expertise or meticulousness of the author, contains oversights. These omissions can be absent-minded mistakes or gaps in the argument, stemming from a lack of knowledge, foresight, or attentiveness.

Such gaps can be found in:

- Missed opportunities to counter or address opposing views

- Failure to consider certain relevant aspects or perspectives

- Incomplete or insufficient data that leaves the argument weak

- Failing to address potential criticism or counter-arguments

By shining a light on these weaknesses, you increase the depth and breadth of your critical analysis. It helps you to estimate the full worth of the text, understand its limitations, and contextualize it within the broader landscape of related work. Ultimately, noticing these oversights helps to make your analysis more balanced and considerate of the full complexity of the topic at hand.

You may notice here that identifying oversights requires you to already have a broad understanding and knowledge of the topic in the first place – so, study up!

7. Fact Checking

Fact-checking refers to the process of meticulously verifying the truth and accuracy of the data, statements, or claims put forward in a text.

Fact-checking serves as the bulwark against misinformation, bias, and unsubstantiated claims. It demands thorough research, resourcefulness, and a keen eye for detail.

Fact-checking goes beyond surface-level assertions. It involves:

- Examining the validity of the data given

- Cross-referencing information with other reliable sources

- Scrutinizing references, citations, and sources utilized in the article

- Distinguishing between opinion and objectively verifiable truths

- Checking for outdated, biased, or unbalanced information

If you identify factual errors, it's vital to highlight them when critically analyzing the text. But remember, you could also (after careful scrutiny) highlight that the text appears to be factually correct; that, too, is critical analysis.

8. Exploring Counterexamples

Exploring counterexamples involves searching for and presenting instances or cases that contradict the arguments or conclusions presented in a text.

Counterexamples are an effective way to challenge the generalizations, assumptions or conclusions made in an article or theory. They can reveal weaknesses or oversights in the logic or validity of the author’s perspective.

Considerations in counterexample analysis are:

- Identifying generalizations made in the text

- Seeking examples in academic literature or real-world instances that contradict these generalizations

- Assessing the impact of these counterexamples on the validity of the text’s argument or conclusion

Exploring counterexamples enriches your critical analysis by injecting an extra layer of scrutiny, and even doubt, into the text.

By presenting counterexamples, you not only test the resilience and validity of the text but also open up new avenues of discussion and investigation that can further your understanding of the topic.

See Also: Counterargument Examples

9. Assessing Methodologies

Assessing methodologies entails examining the techniques, tools, or procedures employed by the author to collect, analyze and present their information.

The accuracy and validity of a text’s conclusions often depend on the credibility and appropriateness of the methodologies used.

Aspects to inspect include:

- The appropriateness of the research method for the research question

- The adequacy of the sample size

- The validity and reliability of data collection instruments

- The application of statistical tests and evaluations

- The implementation of controls to prevent bias or mitigate its impact

One strategy you could implement here is to consider a range of other methodologies the author could have used. If the author conducted interviews, consider questioning why they didn’t use broad surveys that could have presented more quantitative findings. If they only interviewed people with one perspective, consider questioning why they didn’t interview a wider variety of people, etc.
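For the "adequacy of the sample size" item listed above, one quick check applies when a study estimates a single proportion (for example, the share of respondents who agree with a statement). The Python sketch below uses the standard normal-approximation formula n = z²·p(1 − p)/e². It assumes simple random sampling, about 95% confidence (z = 1.96), and the conservative p = 0.5. It does not replace a power calculation for studies comparing groups, and the function names are hypothetical.

```python
import math


def required_sample_size(margin: float, p: float = 0.5, z: float = 1.96) -> int:
    """Minimum n for estimating one proportion to within +/- margin,
    using the normal approximation n = z^2 * p * (1 - p) / margin^2.

    Assumes simple random sampling; z = 1.96 is roughly 95% confidence,
    and p = 0.5 is the conservative choice that gives the largest n."""
    if not 0 < margin < 1:
        raise ValueError("margin must be between 0 and 1")
    return math.ceil(z**2 * p * (1 - p) / margin**2)


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Approximate +/- margin of error achieved by a sample of size n."""
    return z * math.sqrt(p * (1 - p) / n)


if __name__ == "__main__":
    print(required_sample_size(0.05))      # 385
    print(round(margin_of_error(100), 3))  # 0.098
```

If a survey of 100 people reports a percentage as precise to within a couple of points, the roughly ±10-point margin above gives you concrete grounds to question it.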

See Also: A List of Research Methodologies

10. Exploring Alternative Explanations

Exploring alternative explanations refers to the practice of proposing differing or opposing ideas to those put forward in the text.

An underlying assumption in any analysis is that there may be multiple valid perspectives on a single topic. The text you’re analyzing might provide one perspective, but your job is to bring into the light other reasonable explanations or interpretations.

Cultivating alternative explanations often involves:

- Formulating hypotheses or theories that differ from those presented in the text

- Referring to other established ideas or models that offer a differing viewpoint

- Suggesting a new or unique angle to interpret the data or phenomenon discussed in the text

Searching for alternative explanations challenges the authority of a singular narrative or perspective, fostering an environment ripe for intellectual discourse and critical thinking. It nudges you to examine the topic from multiple angles, enhancing your understanding and appreciation of the complexity inherent in the field.

A Full List of Critical Analysis Skills

- Exploring Strengths and Weaknesses

- Evaluating Sources

- Identifying Similarities

- Identifying Differences

- Identifying Biases

- Hypothesis Testing

- Fact-Checking

- Exploring Counterexamples

- Assessing Methodologies

- Exploring Alternative Explanations

- Pointing Out Contradictions

- Challenging the Significance

- Cause-And-Effect Analysis

- Assessing Generalizability

- Highlighting Inconsistencies

- Reductio ad Absurdum

- Comparing to Expert Testimony

- Comparing to Precedent

- Reframing the Argument

- Pointing Out Fallacies

- Questioning the Ethics

- Clarifying Definitions

- Challenging Assumptions

- Exposing Oversimplifications

- Highlighting Missing Information

- Demonstrating Irrelevance

- Assessing Effectiveness

- Assessing Trustworthiness

- Recognizing Patterns

- Differentiating Facts from Opinions

- Analyzing Perspectives

- Prioritization

- Making Predictions

- Conducting a SWOT Analysis

- PESTLE Analysis

- Asking the Five Whys

- Correlating Data Points

- Finding Anomalies Or Outliers

- Comparing to Expert Literature

- Drawing Inferences

- Assessing Validity & Reliability

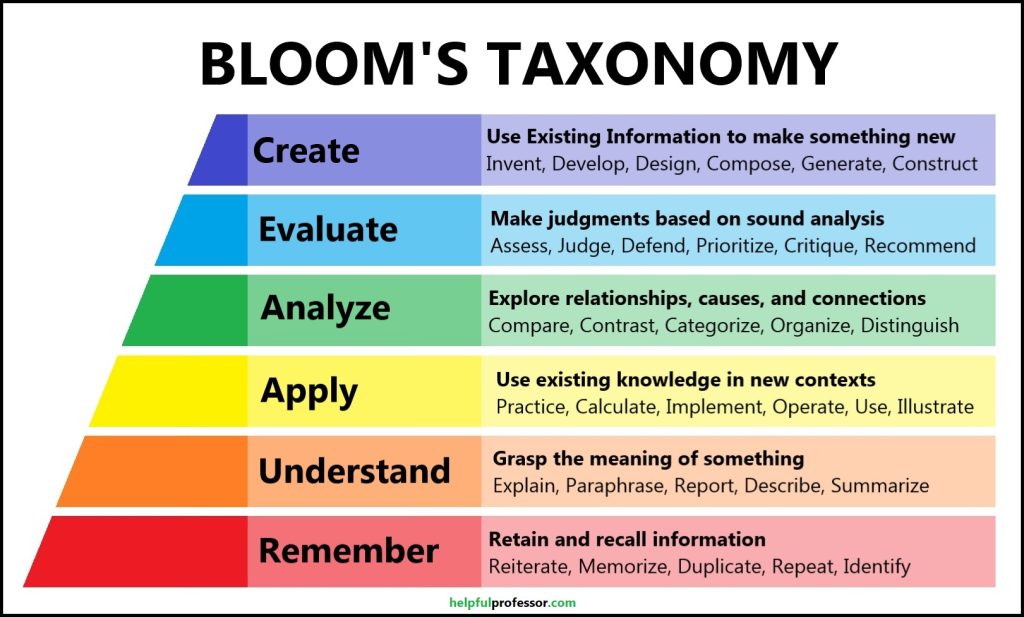

Analysis and Bloom’s Taxonomy

Benjamin Bloom placed analysis as the third-highest form of thinking on his ladder of cognitive skills called Bloom's Taxonomy.

This taxonomy starts with the lowest levels of thinking: remembering and understanding. The further we go up the ladder, the more we reach higher-order thinking skills that demonstrate depth of understanding and knowledge.

Here’s a full outline of the taxonomy in a table format:

Chris Drew (PhD)

Dr. Chris Drew is the founder of the Helpful Professor. He holds a PhD in education and has published over 20 articles in scholarly journals. He is the former editor of the Journal of Learning Development in Higher Education.



- Published: 31 January 2022

The fundamentals of critically appraising an article

Sneha Chotaliya

BDJ Student, volume 29, pages 12–13 (2022)


We are often surrounded by an abundance of research and articles, but their quality and validity can vary massively: not everything will be of good quality, or even valid. An important part of reading a paper is first assessing it. This is a key skill for all healthcare professionals, as anything we read can influence our practice. It is also important to stay up to date with the latest research and findings.



Author information

Academic Foundation Dentist, London, UK

Correspondence to Sneha Chotaliya.


About this article

Cite this article

Chotaliya, S. The fundamentals of critically appraising an article. BDJ Student 29, 12–13 (2022). https://doi.org/10.1038/s41406-021-0275-6

Issue Date: 31 January 2022


Mayo Clinic Libraries

Systematic Reviews: Critical Appraisal by Study Design


Tools for Critical Appraisal of Studies

“The purpose of critical appraisal is to determine the scientific merit of a research report and its applicability to clinical decision making.” 1 Conducting a critical appraisal of a study is imperative to any well executed evidence review, but the process can be time consuming and difficult. 2 The critical appraisal process requires “a methodological approach coupled with the right tools and skills to match these methods is essential for finding meaningful results.” 3 In short, it is a method of differentiating good research from bad research.

Critical Appraisal by Study Design (featured tools)

- Non-RCTs or Observational Studies

- Diagnostic Accuracy

- Animal Studies

- Qualitative Research

- Tool Repository

- AMSTAR 2 The original AMSTAR was developed to assess the risk of bias in systematic reviews that included only randomized controlled trials. AMSTAR 2 was published in 2017 and allows researchers to “identify high quality systematic reviews, including those based on non-randomised studies of healthcare interventions.” 4 more... less... AMSTAR 2 (A MeaSurement Tool to Assess systematic Reviews)

- ROBIS ROBIS is a tool designed specifically to assess the risk of bias in systematic reviews. “The tool is completed in three phases: (1) assess relevance(optional), (2) identify concerns with the review process, and (3) judge risk of bias in the review. Signaling questions are included to help assess specific concerns about potential biases with the review.” 5 more... less... ROBIS (Risk of Bias in Systematic Reviews)

- BMJ Framework for Assessing Systematic Reviews This framework provides a checklist that is used to evaluate the quality of a systematic review.

- CASP Checklist for Systematic Reviews This CASP checklist is not a scoring system, but rather a method of appraising systematic reviews by considering: 1. Are the results of the study valid? 2. What are the results? 3. Will the results help locally? more... less... CASP (Critical Appraisal Skills Programme)

- CEBM Systematic Reviews Critical Appraisal Sheet The CEBM’s critical appraisal sheets are designed to help you appraise the reliability, importance, and applicability of clinical evidence. more... less... CEBM (Centre for Evidence-Based Medicine)

- JBI Critical Appraisal Tools, Checklist for Systematic Reviews JBI Critical Appraisal Tools help you assess the methodological quality of a study and to determine the extent to which study has addressed the possibility of bias in its design, conduct and analysis.

- NHLBI Study Quality Assessment of Systematic Reviews and Meta-Analyses The NHLBI’s quality assessment tools were designed to assist reviewers in focusing on concepts that are key for critical appraisal of the internal validity of a study. more... less... NHLBI (National Heart, Lung, and Blood Institute)

- RoB 2 RoB 2 “provides a framework for assessing the risk of bias in a single estimate of an intervention effect reported from a randomized trial,” rather than the entire trial. 6 more... less... RoB 2 (revised tool to assess Risk of Bias in randomized trials)

- CASP Randomised Controlled Trials Checklist This CASP checklist considers various aspects of an RCT that require critical appraisal: 1. Is the basic study design valid for a randomized controlled trial? 2. Was the study methodologically sound? 3. What are the results? 4. Will the results help locally? more... less... CASP (Critical Appraisal Skills Programme)

- CONSORT Statement The CONSORT checklist includes 25 items to determine the quality of randomized controlled trials. “Critical appraisal of the quality of clinical trials is possible only if the design, conduct, and analysis of RCTs are thoroughly and accurately described in the report.” 7 more... less... CONSORT (Consolidated Standards of Reporting Trials)

- NHLBI Study Quality Assessment of Controlled Intervention Studies The NHLBI’s quality assessment tools were designed to assist reviewers in focusing on concepts that are key for critical appraisal of the internal validity of a study. more... less... NHLBI (National Heart, Lung, and Blood Institute)

- JBI Critical Appraisal Tools Checklist for Randomized Controlled Trials JBI Critical Appraisal Tools help you assess the methodological quality of a study and to determine the extent to which study has addressed the possibility of bias in its design, conduct and analysis.

- ROBINS-I ROBINS-I is a “tool for evaluating risk of bias in estimates of the comparative effectiveness… of interventions from studies that did not use randomization to allocate units… to comparison groups.” 8 more... less... ROBINS-I (Risk Of Bias in Non-randomized Studies – of Interventions)

- NOS This tool is used primarily to evaluate and appraise case-control or cohort studies. more... less... NOS (Newcastle-Ottawa Scale)

- AXIS Cross-sectional studies are frequently used as an evidence base for diagnostic testing, risk factors for disease, and prevalence studies. “The AXIS tool focuses mainly on the presented [study] methods and results.” 9 more... less... AXIS (Appraisal tool for Cross-Sectional Studies)

- NHLBI Study Quality Assessment Tools for Non-Randomized Studies The NHLBI’s quality assessment tools were designed to assist reviewers in focusing on concepts that are key for critical appraisal of the internal validity of a study. • Quality Assessment Tool for Observational Cohort and Cross-Sectional Studies • Quality Assessment of Case-Control Studies • Quality Assessment Tool for Before-After (Pre-Post) Studies With No Control Group • Quality Assessment Tool for Case Series Studies more... less... NHLBI (National Heart, Lung, and Blood Institute)

- Case Series Studies Quality Appraisal Checklist Developed by the Institute of Health Economics (Canada), the checklist is comprised of 20 questions to assess “the robustness of the evidence of uncontrolled, [case series] studies.” 10

- Methodological Quality and Synthesis of Case Series and Case Reports In this paper, Dr. Murad and colleagues “present a framework for appraisal, synthesis and application of evidence derived from case reports and case series.” 11

- MINORS The MINORS instrument contains 12 items and was developed for evaluating the quality of observational or non-randomized studies. 12 This tool may be of particular interest to researchers who would like to critically appraise surgical studies. more... less... MINORS (Methodological Index for Non-Randomized Studies)

- JBI Critical Appraisal Tools for Non-Randomized Trials JBI Critical Appraisal Tools help you assess the methodological quality of a study and to determine the extent to which study has addressed the possibility of bias in its design, conduct and analysis. • Checklist for Analytical Cross Sectional Studies • Checklist for Case Control Studies • Checklist for Case Reports • Checklist for Case Series • Checklist for Cohort Studies

- QUADAS-2 The QUADAS-2 tool “is designed to assess the quality of primary diagnostic accuracy studies… [it] consists of 4 key domains that discuss patient selection, index test, reference standard, and flow of patients through the study and timing of the index tests and reference standard.” 13 more... less... QUADAS-2 (a revised tool for the Quality Assessment of Diagnostic Accuracy Studies)

- JBI Critical Appraisal Tools Checklist for Diagnostic Test Accuracy Studies JBI Critical Appraisal Tools help you assess the methodological quality of a study and to determine the extent to which study has addressed the possibility of bias in its design, conduct and analysis.

- STARD 2015 The authors of the standards note that “[e]ssential elements of [diagnostic accuracy] study methods are often poorly described and sometimes completely omitted, making both critical appraisal and replication difficult, if not impossible.”10 The Standards for the Reporting of Diagnostic Accuracy Studies was developed “to help… improve completeness and transparency in reporting of diagnostic accuracy studies.” 14 more... less... STARD 2015 (Standards for the Reporting of Diagnostic Accuracy Studies)

- CASP Diagnostic Study Checklist This CASP checklist considers various aspects of diagnostic test studies including: 1. Are the results of the study valid? 2. What were the results? 3. Will the results help locally? more... less... CASP (Critical Appraisal Skills Programme)

- CEBM Diagnostic Critical Appraisal Sheet The CEBM’s critical appraisal sheets are designed to help you appraise the reliability, importance, and applicability of clinical evidence. more... less... CEBM (Centre for Evidence-Based Medicine)

- SYRCLE’s RoB “[I]mplementation of [SYRCLE’s RoB tool] will facilitate and improve critical appraisal of evidence from animal studies. This may… enhance the efficiency of translating animal research into clinical practice and increase awareness of the necessity of improving the methodological quality of animal studies.” 15 more... less... SYRCLE’s RoB (SYstematic Review Center for Laboratory animal Experimentation’s Risk of Bias)

- ARRIVE 2.0 “The [ARRIVE 2.0] guidelines are a checklist of information to include in a manuscript to ensure that publications [on in vivo animal studies] contain enough information to add to the knowledge base.” 16 more... less... ARRIVE 2.0 (Animal Research: Reporting of In Vivo Experiments)

- Critical Appraisal of Studies Using Laboratory Animal Models: This article provides “an approach to critically appraising papers based on the results of laboratory animal experiments,” and discusses various “bias domains” in the literature that critical appraisal can identify.18

- CEBM (Centre for Evidence-Based Medicine) Critical Appraisal of Qualitative Studies Sheet: The CEBM’s critical appraisal sheets are designed to help you appraise the reliability, importance and applicability of clinical evidence.

- CASP (Critical Appraisal Skills Programme) Qualitative Studies Checklist: This CASP checklist considers various aspects of qualitative research studies, including: 1. Are the results of the study valid? 2. What were the results? 3. Will the results help locally?

- Quality Assessment and Risk of Bias Tool Repository: Created by librarians at Duke University, this extensive listing contains over 100 commonly used risk of bias tools that may be sorted by study type.

- Latitudes Network: A library of risk of bias tools for use in evidence syntheses that provides selection help and training videos.

References & Recommended Reading

1. Kolaski K, Logan LR, Ioannidis JP. Guidance to best tools and practices for systematic reviews. British Journal of Pharmacology. 2024;181(1):180-210.

2. Portney LG. Foundations of clinical research: applications to evidence-based practice. 4th ed. Philadelphia: F A Davis; 2020.

3. Fowkes FG, Fulton PM. Critical appraisal of published research: introductory guidelines. BMJ (Clinical research ed). 1991;302(6785):1136-1140.

4. Singh S. Critical appraisal skills programme. Journal of Pharmacology and Pharmacotherapeutics. 2013;4(1):76-77.

5. Shea BJ, Reeves BC, Wells G, et al. AMSTAR 2: a critical appraisal tool for systematic reviews that include randomised or non-randomised studies of healthcare interventions, or both. BMJ (Clinical research ed). 2017;358:j4008.

6. Whiting P, Savovic J, Higgins JPT, et al. ROBIS: A new tool to assess risk of bias in systematic reviews was developed. Journal of clinical epidemiology. 2016;69:225-234.

7. Sterne JAC, Savovic J, Page MJ, et al. RoB 2: a revised tool for assessing risk of bias in randomised trials. BMJ (Clinical research ed). 2019;366:l4898.

8. Moher D, Hopewell S, Schulz KF, et al. CONSORT 2010 Explanation and Elaboration: Updated guidelines for reporting parallel group randomised trials. Journal of clinical epidemiology. 2010;63(8):e1-37.

9. Sterne JA, Hernan MA, Reeves BC, et al. ROBINS-I: a tool for assessing risk of bias in non-randomised studies of interventions. BMJ (Clinical research ed). 2016;355:i4919.

10. Downes MJ, Brennan ML, Williams HC, Dean RS. Development of a critical appraisal tool to assess the quality of cross-sectional studies (AXIS). BMJ open. 2016;6(12):e011458.

11. Guo B, Moga C, Harstall C, Schopflocher D. A principal component analysis is conducted for a case series quality appraisal checklist. Journal of clinical epidemiology. 2016;69:199-207.e192.

12. Murad MH, Sultan S, Haffar S, Bazerbachi F. Methodological quality and synthesis of case series and case reports. BMJ evidence-based medicine. 2018;23(2):60-63.

13. Slim K, Nini E, Forestier D, Kwiatkowski F, Panis Y, Chipponi J. Methodological index for non-randomized studies (MINORS): development and validation of a new instrument. ANZ journal of surgery. 2003;73(9):712-716.

14. Whiting PF, Rutjes AWS, Westwood ME, et al. QUADAS-2: a revised tool for the quality assessment of diagnostic accuracy studies. Annals of internal medicine. 2011;155(8):529-536.

15. Bossuyt PM, Reitsma JB, Bruns DE, et al. STARD 2015: an updated list of essential items for reporting diagnostic accuracy studies. BMJ (Clinical research ed). 2015;351:h5527.

16. Hooijmans CR, Rovers MM, de Vries RBM, Leenaars M, Ritskes-Hoitinga M, Langendam MW. SYRCLE's risk of bias tool for animal studies. BMC medical research methodology. 2014;14:43.

17. Percie du Sert N, Ahluwalia A, Alam S, et al. Reporting animal research: Explanation and elaboration for the ARRIVE guidelines 2.0. PLoS biology. 2020;18(7):e3000411.

18. O'Connor AM, Sargeant JM. Critical appraisal of studies using laboratory animal models. ILAR journal. 2014;55(3):405-417.



- Last Updated: Apr 12, 2024 9:46 AM

- URL: https://libraryguides.mayo.edu/systematicreviewprocess

- Teesside University Student & Library Services

- Learning Hub Group

Critical Appraisal for Health Students


Appraisal of a Quantitative paper: Top tips


Critical appraisal of a quantitative paper (RCT)

This guide, aimed at health students, provides basic-level support for appraising quantitative research papers. It is designed for students who have already attended lectures on critical appraisal. One framework for appraising quantitative research (based on reliability and on internal and external validity) is provided, and there is an opportunity to practise the technique on a sample article.

Please note that this framework is for appraising one particular type of quantitative research, the Randomised Controlled Trial (RCT), which is defined as:

a trial in which participants are randomly assigned to one of two or more groups: the experimental group or groups receive the intervention or interventions being tested; the comparison group (control group) receives usual care, no treatment or a placebo. The groups are then followed up to see if there are any differences between the results. This helps in assessing the effectiveness of the intervention. (CASP, 2020)

Support materials

- Framework for reading quantitative papers (RCTs)

- Critical appraisal of a quantitative paper PowerPoint

To practise following this framework for critically appraising a quantitative article, please look at the following article:

Marrero, D.G. et al (2016) 'Comparison of commercial and self-initiated weight loss programs in people with prediabetes: a randomized control trial', AJPH Research, 106(5), pp. 949-956.

Critical Appraisal of a quantitative paper (RCT): practical example

- Internal Validity

- External Validity

- Reliability Measurement Tool

How to use this practical example

Using the framework, you can have a go at appraising a quantitative paper. We are going to look at the following article:

Marrero, D.G. et al (2016) 'Comparison of commercial and self-initiated weight loss programs in people with prediabetes: a randomized control trial', AJPH Research, 106(5), pp. 949-956.

Step 1. Take a quick look at the article.

Step 2. Read the internal validity questions below and look for the answers in the article.

Step 3. Repeat with the external validity and reliability questions.

Questioning the internal validity:

- Randomisation: How were participants allocated to each group? Did a randomisation process take place?

- Comparability of groups: How similar were the groups (e.g. age, sex, ethnicity)? Is this made clear?

- Blinding (none, single, double or triple): Who was not aware of which group a patient was in (e.g. nobody; only the patient; the patient and clinician; or the patient, clinician and researcher)? Was it feasible for more blinding to have taken place?

- Equal treatment of groups: Were both groups treated in the same way?

- Attrition: What percentage of participants dropped out? Did this adversely affect one group? Has this been evaluated?

- Overall internal validity: Does the research measure what it is supposed to be measuring?

Questioning the external validity:

- Attrition: Was everyone accounted for at the end of the study? Was any attempt made to contact drop-outs?

- Sampling approach: How was the sample selected? Was it based on probability or non-probability sampling? What was the approach (e.g. simple random, convenience)? Was this an appropriate approach?

- Sample size (power calculation): How many participants were there? Was a sample size calculation performed? Did the study recruit the number of participants the calculation required?

- Exclusion/inclusion criteria: Were the criteria set out clearly? Were they based on recognised diagnostic criteria?

- Overall external validity: Can the results be applied to the wider population?

Questioning the reliability (measurement tool):

- Internal consistency reliability (Cronbach’s alpha): Has a Cronbach’s alpha score of 0.7 or above been reported?

- Test-retest reliability correlation: Was the test repeated more than once? Were the same results obtained? Has a correlation coefficient been reported, and is it above 0.7?

- Validity of the measurement tool: Is it an established tool? If not, what has been done to check that it is reliable (e.g. pilot study, expert panel, literature review)?

- Criterion validity (test against other tools): Has a criterion validity comparison been carried out? Was the score above 0.7?

- Overall reliability: How consistent are the measurements?

Overall validity and reliability:

- Overall, how valid and reliable is the paper?



- Last Updated: Aug 25, 2023 2:48 PM

- URL: https://libguides.tees.ac.uk/critical_appraisal

The Critical Appraisal of the Article


Critical appraisal is an important means of determining the relevance, validity, and transparency of research. This paper presents a critical appraisal of the article ‘Light drinking in pregnancy, a risk for behavioral problems and cognitive deficits at 3 years of age’, with special focus on the relevance of the article, its validity, and the validity of its results.

The article was published in the International Journal of Epidemiology by Oxford University Press on behalf of the International Epidemiological Association. “Critical appraisal is the process of systematically examining research evidence to assess its validity, relevance and results before using it to inform a decision.” (Abdel-Ghaffar, n.d., p. 12). Critical appraisal is therefore an important part of evidence-based clinical practice: it establishes whether research is valid before its results are implemented.

Research has shown that heavy drinking during pregnancy affects children's cognitive and behavioral development. However, it remains unclear whether light drinking during pregnancy affects the fetus. The objective of the study is to assess whether the children of mothers who drank lightly during pregnancy show behavioral problems and cognitive deficits. The subject is a timely one, since there is strong debate throughout the world about the effects of alcohol use by pregnant women. The results show that children born to mothers who drank lightly during pregnancy did not suffer from behavioral disorders or cognitive deficits, whereas children of mothers who drank heavily during pregnancy faced a range of health problems. Because public knowledge of the problem is lacking, the results can usefully inform the public.

This study used data from the Millennium Cohort Study, a longitudinal study of infants born in the United Kingdom, with the sample drawn from England, Wales, Scotland, and Northern Ireland. Data were collected through interviews and home visits. Three instruments were used: the Strengths and Difficulties Questionnaire (SDQ) to assess behavioral problems, and the British Ability Scales (BAS) and the Bracken School Readiness Assessment (BSRA) to assess cognitive deficits. The interview questions focused on socio-economic circumstances, health problems, and drinking during pregnancy. The study addressed a real problem and achieved its objectives. The interviews were conducted by experts. The study had two sweeps: the first survey was conducted when the cohort members were aged 9 months and the second when they were 3 years old, so the planned follow-up was completed.

The findings answer the research objective: light drinking during pregnancy did not lead to behavioral problems or cognitive deficits in the children. The result is significant and directly addresses the objective of the study. A J-shaped relationship between drinking during pregnancy and the children's scores was evident, and there were no differences between the results of children whose mothers abstained and those whose mothers drank lightly during pregnancy.

In this study, two-thirds of the mothers abstained from alcohol during pregnancy, 29% were light drinkers, 6% were moderate drinkers, and 2% were heavy drinkers.

“The data used in our study were from a large nationally representative sample of young children that were collected prospectively. However, the Millennium Cohort Study sample is not representative of all pregnancies or births and so data on miscarriages, stillbirths, and neonatal deaths were not included.” (Kelly et al., 2008, p. 6). The study also reveals that alcohol consumption among pregnant women is widespread despite the social stigma attached to it. This stigma, however, points to the study's main drawbacks: women may be reluctant to report their drinking openly, which makes light drinking very difficult to measure correctly. Nor can the amount that counts as light drinking be defined precisely, so it may be hard to capture in a questionnaire. Finally, children's behavioral problems may have causes other than alcohol consumption, so factors such as genetic make-up and social determinants such as financial condition and family background should also be taken into consideration and assessed systematically and scientifically.

Pregnant women may be loath to reveal their alcohol intake because of the stigma attached to it, so a questionnaire may be an inappropriate way to capture their responses. Many of the terms used in the study are vague, and some concepts resist precise definition. For example, light drinking cannot be defined objectively and varies from person to person: social drinkers can be categorized as light drinkers, but the question is up to what level and quantity. Participants' responses therefore cannot be confined to the questions posed in the questionnaire. To obtain accurate data and get the pulse of the participants, it would be better to use in-depth interviews and participant observation. The quantitative nature of the study may hamper the accuracy of its results and thereby its reliability. A further flaw is that the study had only two sweeps, the first when the children were nine months old and the second when they were three years old. These two sweeps cannot provide effective and reliable information on how light drinking by mothers affects their children, since other factors also drive the development of cognitive and behavioral problems. If the study were conducted using interviews, the researcher could adapt the questions to changing social norms; as norms change over time, a questionnaire developed years earlier may no longer be apt at the time of the study. I would therefore suggest that the study could have been improved, and its results made more reliable, had it been conducted qualitatively.

The consumption of alcohol by pregnant women is considered a risk factor for the physical, mental, and cognitive growth of children, yet the public has not received accurate information on this issue. The article examined whether a mother's light drinking during pregnancy hampers the development of the fetus, and its findings support the conclusion that light drinking during pregnancy does not lead to cognitive deficits or behavioral disorders in children. The study is timely and provides valid, current information on the problem. At the same time, its reliance on a questionnaire and a purely quantitative design may have reduced the accuracy of its results.

Abdel-Ghaffar, S. (n.d.). Critical appraisal: An overview: What is critical appraisal? Faculty of Medicine, Cairo University. Web.

Kelly, Y., Sacker, A., Gray, R., Kelly, J., Wolke, D., & Quigley, M. A. (2008). Light drinking in pregnancy, a risk for behavioral problems and cognitive deficits at 3 years of age: Strengths and limitations of the study. International Journal of Epidemiology, 1-12. Oxford University Press.

Cite this paper


StudyCorgi. (2022, February 17). The Critical Appraisal of the Article. https://studycorgi.com/the-critical-appraisal-of-the-article/


This paper, “The Critical Appraisal of the Article”, was written and voluntarily submitted to our free essay database by a straight-A student. Please ensure you properly reference the paper if you're using it to write your assignment.

Before publication, the StudyCorgi editorial team proofread and checked the paper to make sure it meets the highest standards in terms of grammar, punctuation, style, fact accuracy, copyright issues, and inclusive language. Last updated: November 10, 2023 .


What Is a Critical Analysis Essay: Definition

Have you ever had to read a book or watch a movie for school and then write an essay about it? Well, a critical analysis essay is a type of essay where you do just that! So, when wondering what a critical analysis essay is, know that it's a fancy way of saying that you're going to take a closer look at something and analyze it.

So, let's say you're assigned to read a novel for your literature class. A critical analysis essay would require you to examine the characters, plot, themes, and writing style of the book. You would need to evaluate its strengths and weaknesses and provide your own thoughts and opinions on the text.

Similarly, if you're tasked with writing a critical analysis essay on a scientific article, you would need to analyze the methodology, results, and conclusions presented in the article and evaluate its significance and potential impact on the field.

The key to a successful critical analysis essay is to approach the subject matter with an open mind and a willingness to engage with it on a deeper level. By doing so, you can gain a greater appreciation and understanding of the subject matter and develop your own informed opinions and perspectives. Considering this, we bet you want to learn how to write a critical analysis essay easily and efficiently, so keep on reading to find out more!


Critical Analysis Essay Topics by Category

If you're looking for an interesting and thought-provoking topic for your critical analysis essay, you've come to the right place! Critical analysis essays can cover many subjects and topics, with endless possibilities. To help you get started, we've compiled a list of critical analysis essay topics by category. We've got you covered whether you're interested in literature, science, social issues, or something else. So, grab a notebook and pen, and get ready to dive deep into your chosen topic. In the following sections, we will provide you with various good critical analysis paper topics to choose from, each with its unique angle and approach.

Critical Analysis Essay Topics on Mass Media

From television and radio to social media and advertising, mass media is everywhere, shaping our perceptions of the world around us. As a result, it's no surprise that critical analysis essays on mass media are a popular choice for students and scholars alike. To help you get started, here are ten critical analysis essay topics on mass media:

- The Influence of Viral Memes on Pop Culture: An In-Depth Analysis.

- The Portrayal of Mental Health in Television: Examining Stigmatization and Advocacy.

- The Power of Satirical News Shows: Analyzing the Impact of Political Commentary.

- Mass Media and Consumer Behavior: Investigating Advertising and Persuasion Techniques.

- The Ethics of Deepfake Technology: Implications for Trust and Authenticity in Media.

- Media Framing and Public Perception: A Critical Analysis of News Coverage.

- The Role of Social Media in Shaping Political Discourse and Activism.

- Fake News in the Digital Age: Identifying Disinformation and Its Effects.

- The Representation of Gender and Diversity in Hollywood Films: A Critical Examination.

- Media Ownership and Its Impact on Journalism and News Reporting: A Comprehensive Study.

Critical Analysis Essay Topics on Sports

Sports are a ubiquitous aspect of our culture, and they have the power to unite and inspire people from all walks of life. Whether you're an athlete, a fan, or just someone who appreciates the beauty of competition, there's no denying the significance of sports in our society. If you're looking for an engaging and thought-provoking topic for your critical analysis essay, sports offer a wealth of possibilities:

- The Role of Sports in Diplomacy: Examining International Relations Through Athletic Events.

- Sports and Identity: How Athletic Success Shapes National and Cultural Pride.

- The Business of Sports: Analyzing the Economics and Commercialization of Athletics.

- Athlete Activism: Exploring the Impact of Athletes' Social and Political Engagement.

- Sports Fandom and Online Communities: The Impact of Social Media on Fan Engagement.

- The Representation of Athletes in the Media: Gender, Race, and Stereotypes.

- The Psychology of Sports: Exploring Mental Toughness, Motivation, and Peak Performance.

- The Evolution of Sports Equipment and Technology: From Innovation to Regulation.

- The Legacy of Sports Legends: Analyzing Their Impact Beyond Athletic Achievement.

- Sports and Social Change: How Athletic Movements Shape Societal Attitudes and Policies.

Critical Analysis Essay Topics on Literature and Arts

Literature and arts can inspire, challenge, and transform our perceptions of the world around us. From classic novels to contemporary art, the realm of literature and arts offers many possibilities for critical analysis essays. Here are ten original critical analysis essay topics on literature and arts:

- The Use of Symbolism in Contemporary Poetry: Analyzing Hidden Meanings and Significance.

- The Intersection of Art and Identity: How Self-Expression Shapes Artists' Works.

- The Role of Nonlinear Narrative in Postmodern Novels: Techniques and Interpretation.

- The Influence of Jazz on African American Literature: A Comparative Study.

- The Complexity of Visual Storytelling: Graphic Novels and Their Narrative Power.

- The Art of Literary Translation: Challenges, Impact, and Interpretation.

- The Evolution of Music Videos: From Promotional Tools to a Unique Art Form.

- The Literary Techniques of Magical Realism: Exploring Reality and Fantasy.

- The Impact of Visual Arts in Advertising: Analyzing the Connection Between Art and Commerce.

- Art in Times of Crisis: How Artists Respond to Societal and Political Challenges.

Critical Analysis Essay Topics on Culture

Culture is a dynamic and multifaceted aspect of our society, encompassing everything from language and religion to art and music. As a result, there are countless possibilities for critical analysis essays on culture. Whether you're interested in exploring the complexities of globalization or delving into the nuances of cultural identity, there's a wealth of topics to choose from:

- The Influence of K-Pop on Global Youth Culture: A Comparative Study.

- Cultural Significance of Street Art in Urban Spaces: Beyond Vandalism.

- The Role of Mythology in Shaping Indigenous Cultures and Belief Systems.

- Nollywood: Analyzing the Cultural Impact of Nigerian Cinema on the African Diaspora.

- The Language of Hip-Hop Lyrics: A Semiotic Analysis of Cultural Expression.

- Digital Nomads and Cultural Adaptation: Examining the Subculture of Remote Work.

- The Cultural Significance of Tattooing Among Indigenous Tribes in Oceania.

- The Art of Culinary Fusion: Analyzing Cross-Cultural Food Trends and Innovation.

- The Impact of Cultural Festivals on Local Identity and Economy.

- The Influence of Internet Memes on Language and Cultural Evolution.

How to Write a Critical Analysis: Easy Steps

When wondering how to write a critical analysis essay, remember that it can be a challenging but rewarding process. Crafting a critical analysis requires a careful and thoughtful examination of a text or artwork to assess its strengths, weaknesses, and broader implications. The key to success is to approach the task in a systematic and organized manner, breaking it down into two distinct steps: critical reading and critical writing. Here are some tips for each step of the process to help you write a critical essay.

Step 1: Critical Reading

Here are some tips for critical reading that can help you with your critical analysis paper:

- Read actively: Don't just read the text passively; actively engage with it by highlighting or underlining important points, taking notes, and asking questions.

- Identify the author's main argument: Figure out what the author is trying to say and what evidence they use to support their argument.

- Evaluate the evidence: Determine whether the evidence is reliable, relevant, and sufficient to support the author's argument.

- Analyze the author's tone and style: Consider how the author's tone and style affect the reader's interpretation of the text.

- Identify assumptions: Identify any underlying assumptions the author makes and consider whether they are valid or questionable.

- Consider alternative perspectives: Think about other perspectives or interpretations of the text and how they might affect the author's argument.

- Assess the author's credibility: Evaluate the author's credibility by considering their expertise, biases, and motivations.

- Consider the context: Consider the historical, social, cultural, and political context in which the text was written and how it affects its meaning.

- Pay attention to language: Pay attention to the author's language, including metaphors, symbolism, and other literary devices.

- Synthesize your analysis: Use your analysis of the text to develop a well-supported argument in your critical analysis essay.

Step 2: Critical Analysis Writing

Here are some tips for critical analysis writing, with examples:

- Start with a strong thesis statement: A strong critical analysis thesis is the foundation of any critical analysis essay. It should clearly state your argument or interpretation of the text. Here is a clear example:

- Weak thesis statement: 'The author of this article is wrong.'

- Strong thesis statement: 'In this article, the author's argument fails to consider the socio-economic factors that contributed to the issue, rendering their analysis incomplete.'

- Use evidence to support your argument: Use evidence from the text to support your thesis statement, and make sure to explain how the evidence supports your argument. For example:

- Weak argument: 'The author of this article is biased.'

- Strong argument: 'The author's use of emotional language and selective evidence suggests a bias towards one particular viewpoint, as they fail to consider counterarguments and present a balanced analysis.'

- Analyze the evidence: Analyze the evidence you use by considering its relevance, reliability, and sufficiency. For example:

- Weak analysis: 'The author mentions statistics in their argument.'

- Strong analysis: 'The author uses statistics to support their argument, but it is important to note that these statistics are outdated and do not take into account recent developments in the field.'

- Use quotes and paraphrases effectively: Use quotes and paraphrases to support your argument and properly cite your sources. For example:

- Weak use of quotes: 'The author said, "This is important."'

- Strong use of quotes: 'As the author points out, "This issue is of utmost importance in shaping our understanding of the problem" (p. 25).'

- Use clear and concise language: Use clear and concise language to make your argument easy to understand, and avoid jargon or overly complicated language. For example:

- Weak language: 'The author's rhetorical devices obfuscate the issue.'

- Strong language: 'The author's use of rhetorical devices such as metaphor and hyperbole obscures the key issues at play.'

- Address counterarguments: Address potential counterarguments to your argument and explain why your interpretation is more convincing. For example:

- Weak argument: 'The author is wrong because they did not consider X.'

- Strong argument: 'While the author's analysis is thorough, it overlooks the role of X in shaping the issue; accounting for this factor yields a more nuanced understanding of the problem.'

- Consider the audience: Consider your audience during your writing process. Your language and tone should be appropriate for your audience and should reflect the level of knowledge they have about the topic. For example:

- Weak language: 'As any knowledgeable reader can see, the author's argument is flawed.'

- Strong language: 'Through a critical analysis of the author's argument, it becomes clear that there are gaps in their analysis that require further consideration.'


Creating a Detailed Critical Analysis Essay Outline

Creating a detailed outline is essential when writing a critical analysis essay. It helps you organize your thoughts and arguments, ensuring your essay flows logically and coherently. Here is a detailed critical analysis outline:

I. Introduction

A. Background information about the text and its author

B. Brief summary of the text

C. Thesis statement that clearly states your argument

II. Analysis of the Text

A. Overview of the text's main themes and ideas

B. Examination of the author's writing style and techniques

C. Analysis of the text's structure and organization

III. Evaluation of the Text

A. Evaluation of the author's argument and evidence

B. Analysis of the author's use of language and rhetorical strategies

C. Assessment of the text's effectiveness and relevance to the topic

IV. Discussion of the Context

A. Exploration of the historical, cultural, and social context of the text

B. Examination of the text's influence on its audience and society

C. Analysis of the text's significance and relevance to the present day

V. Counter Arguments and Responses

A. Identification of potential counterarguments to your argument

B. Refutation of counterarguments and defense of your position

C. Acknowledgement of the limitations and weaknesses of your argument

VI. Conclusion

A. Recap of your argument and main points

B. Evaluation of the text's significance and relevance

C. Final thoughts and recommendations for further research or analysis

This outline can be adjusted to fit the specific requirements of your essay. Still, it should give you a solid foundation for creating a detailed and well-organized critical analysis essay.

Useful Techniques Used in Literary Criticism

There are several techniques used in literary criticism to analyze and evaluate a work of literature. Here are some of the most common techniques:

- Close reading: This technique involves carefully analyzing a text to identify its literary devices, themes, and meanings.

- Historical and cultural context: This technique involves examining the historical and cultural context of a work of literature to understand the social, political, and cultural influences that shaped it.

- Structural analysis: This technique involves analyzing the structure of a text, including its plot, characters, and narrative techniques, to identify patterns and themes.

- Formalism: This technique focuses on the literary elements of a text, such as its language, imagery, and symbolism, to analyze its meaning and significance.

- Psychological analysis: This technique examines the psychological and emotional aspects of a text, including the motivations and desires of its characters, to understand the deeper meanings and themes.

- Feminist and gender analysis: This technique focuses on the representation of gender and sexuality in a text, including how gender roles and stereotypes are reinforced or challenged.

- Marxist and social analysis: This technique examines the social and economic structures portrayed in a text, including issues of class, power, and inequality.

By using these and other techniques, literary critics can offer insightful and nuanced analyses of works of literature, helping readers to understand and appreciate the complexity and richness of the texts.





Perspectives in Clinical Research, Vol. 13, No. 1 (Jan-Mar 2022)

The critical appraisal of randomized controlled trials published in an Indian journal to assess the quality of reporting: A retrospective, cross-sectional study

Sandeep Kumar Gupta

Department of Pharmacology, Heritage Institute of Medical Sciences, Varanasi, Uttar Pradesh, India

Ravi Kant Tiwari

Raj Kumar Goel

Background:

Although randomized controlled trials (RCTs) sit at the highest level of evidence, they are not necessarily of good quality. Hence, RCTs should always be appraised critically. Critical appraisal is the corroboration of evidence by methodically studying its validity, reliability, and applicability.

The primary objective of this study was to critically appraise the RCTs published in the Indian Journal of Pharmacology (IJP) from 2011 to 2016. The secondary objective was to examine how closely the published RCTs adhere to the Consolidated Standards of Reporting Trials (CONSORT) statement.

Materials and Methods:

The present study included all RCTs published as full-text articles in IJP from January 2011 to December 2016. The identified RCTs were critically appraised using the critical appraisal checklist based on CONSORT 2010 guidelines and its extensions.

Results:

Of the included articles, 75% (95% confidence interval [CI]: 0.56–0.87) reported how the sample size was calculated, and 89.29% (95% CI: 0.72–0.96) described the method used to generate the random allocation sequence, but only 35.71% (95% CI: 0.20–0.54) described the allocation concealment method. Likewise, 35.71% (95% CI: 0.20–0.54) of the trials reported results according to the intention-to-treat (ITT) principle, and only 21.43% (95% CI: 0.10–0.39) of the studies reported CIs.

Conclusion:

The allocation concealment method, analysis based on the ITT principle, and CIs were underreported in this study. Greater emphasis should be placed on reporting allocation concealment, ITT analysis, and CIs.

INTRODUCTION

One of the most important skills a physician needs in the era of evidence-based medicine is the ability to scrutinize scientific publications critically. Critical appraisal is a systematic way of reading, understanding, and interpreting scientific publications, identifying their limitations, and judging the adequacy of their results. It weighs how valid, reliable, and valuable a piece of research is, which requires thoroughly scrutinizing the evidence for its validity, results, relevance, impact, and applicability before drawing any conclusion. Critical appraisal thus encourages sound decision-making based on the best available evidence. It helps us determine how accurate a piece of research is (validity), how genuine the result is (reliability), and how pertinent it is to our patient (applicability).[ 1 ]

In general, randomized controlled trials (RCTs) provide authentic results that can inform future research or clinical practice.[ 2 , 3 ] However, trials conducted with inadequate methodology are associated with inflated treatment effects.[ 2 ] There is ample published evidence that the reporting quality of RCTs is suboptimal.[ 2 , 4 ] The Consolidated Standards of Reporting Trials (CONSORT) statement promotes complete and unambiguous reporting of trials and facilitates their critical appraisal and analysis.[ 4 ] Clinicians must rely on scientific publications to keep up to date with new therapies and with new information about existing ones. Because RCTs are not necessarily of good quality, they should always be appraised critically.[ 1 ] Moreover, little information is available about the reporting quality of RCTs published in Indian journals.

The primary objective of this study was to critically appraise the RCTs published in the Indian Journal of Pharmacology (IJP) from 2011 to 2016. The secondary objective was to examine how closely the published RCTs adhere to the CONSORT statement.

MATERIALS AND METHODS

Study design

This was a cross-sectional, retrospective study based on the critical appraisal and assessment of the reporting quality of RCTs published in IJP, measured against the CONSORT statement. Because the research relied exclusively on information freely available in the public domain, ethical approval was not required.

Study selection

The present study included all RCTs published as full-text articles in IJP from January 2011 to December 2016.

Eligibility criteria

Inclusion criteria

Studies were included if the paper described them as an RCT or stated that participants were randomly assigned. Only controlled trials with two comparators were eligible.

Exclusion criteria

Animal experiments, systematic reviews/meta-analyses, pharmacoeconomic studies, drug safety studies, pharmacokinetic/pharmacodynamic studies, drug utilization studies, and cross-sectional studies were excluded. Cohort studies and case series were also excluded. RCTs published as short communications or letters to the editor were not included in the present analysis, because their word limits allow only brief information.

Data extraction

The eligible articles were identified by screening titles, abstracts, and methods sections.

Assessment of reporting quality

The identified RCTs were critically appraised using the CONSORT statement. A critical appraisal checklist was prepared based on the CONSORT 2010 guidelines and their extensions [ Table 1 ].[ 5 ] Each RCT published in IJP was appraised with reference to its methodology to assess its validity, reliability, and applicability.

Table 1: Critical appraisal checklist for randomized controlled trials

NA=Not applicable, CI=Confidence interval

Statistical analysis

Descriptive statistics were used in this study. The lower and upper bounds of the 95% confidence interval (CI) were calculated for each proportion. The data were analyzed using SPSS (Statistical Package for the Social Sciences) version 16 (IBM Corporation, Chicago, Illinois, USA).
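The article says that 95% CI bounds were calculated for each proportion but does not name the interval method. As a minimal sketch, assuming the Wilson score interval (our choice, not confirmed by the authors), the calculation for a single proportion could look like this in Python; the function name `wilson_ci` is hypothetical. For the counts in this study it gives bounds very close to those reported: for example, 21 of 28 articles yields roughly 0.566 to 0.873, against the reported 0.56–0.87.

```python
import math


def wilson_ci(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Return the (lower, upper) Wilson score interval for a binomial proportion.

    Assumption: the article does not state which CI method was used;
    the Wilson interval is shown here as one common choice.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    z2 = z * z
    denom = 1 + z2 / n
    centre = (p + z2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z2 / (4 * n * n)) / denom
    # Clamp to [0, 1] only to absorb floating-point overshoot at p = 0 or p = 1.
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Example: 21 of the 28 included trials reported a sample size calculation.
lower, upper = wilson_ci(21, 28)
print(f"{21 / 28:.2%} (95% CI: {lower:.3f}-{upper:.3f})")
```

The Wilson interval suits small samples like this one because, unlike the simple normal (Wald) approximation, it stays within [0, 1] and still gives a usable interval when a proportion is 100%, as with the 28 of 28 structured abstracts.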

RESULTS

A total of 1102 articles published in IJP from January 2011 to December 2016 were screened for eligibility. The process used to select potentially relevant studies for inclusion is depicted in Figure 1. Of the 1102 articles, 596 were omitted after screening of titles and abstracts, and the remaining 506 underwent further evaluation. Of these, 478 were excluded after full-text review, leaving 28 RCTs for analysis.

Figure 1: Flow diagram of citations through the retrieval and screening process
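The screening counts reported above form a simple subtraction chain, and checking that chain is a quick way to confirm that a flow diagram is internally consistent. The sketch below uses a hypothetical helper, `screening_flow`, to confirm that 1102 screened articles minus 596 title/abstract exclusions leaves 506 for full-text review, and that removing a further 478 leaves the 28 included RCTs.

```python
def screening_flow(screened: int, excluded_title_abstract: int,
                   excluded_full_text: int) -> tuple[int, int]:
    """Return (articles sent to full-text review, articles included).

    Hypothetical helper for checking the arithmetic of a screening flow diagram.
    """
    full_text_reviewed = screened - excluded_title_abstract
    included = full_text_reviewed - excluded_full_text
    # A negative count would mean the reported numbers are inconsistent.
    if full_text_reviewed < 0 or included < 0:
        raise ValueError("exclusions exceed the number of articles available")
    return full_text_reviewed, included


# Counts reported for IJP, January 2011 to December 2016.
full_text, included = screening_flow(1102, 596, 478)
print(f"Full-text review: {full_text}")  # Full-text review: 506
print(f"Included RCTs: {included}")      # Included RCTs: 28
```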

Of the 28 included articles, 21 (75%, 95% CI: 0.56–0.87) mentioned "randomization" in the title. All 28 (100%, 95% CI: 0.87–1) articles had a structured abstract that described the scientific rationale, the specific objectives or hypotheses, the trial design, and the eligibility criteria for participants. Twenty-five (89.29%, 95% CI: 0.72–0.96) articles gave details of the settings and locations. Twenty-seven (96.43%, 95% CI: 0.82–0.99) articles defined their outcome measures.

Twenty-one (75%, 95% CI: 0.56–0.87) articles stated how the sample size was determined. Twenty-five (89.29%, 95% CI: 0.72–0.96) articles described the method used to generate the random allocation sequence. Ten (35.71%, 95% CI: 0.20–0.54) articles described the allocation concealment method. Sixteen (57.14%, 95% CI: 0.39–0.73) articles were blinded studies. All 28 (100%, 95% CI: 0.87–1) studies described the statistical methods used for outcome assessment.
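The counts reported for the 28 included trials can be turned back into the published percentages and intervals. The sketch below makes two assumptions that the article does not confirm: the intervals are Wilson score intervals, and the bounds were truncated (not rounded) to two decimals, which is how the published values appear. The ITT and CI-reporting counts (10 and 6 of 28) are implied by the 35.71% and 21.43% given in the abstract. The names `REPORTED_ITEMS`, `summary_rows`, and `truncate2` are hypothetical.

```python
import math

N_INCLUDED = 28  # number of RCTs included in the analysis

# (reporting item, number of articles reporting it), taken from the text.
REPORTED_ITEMS = [
    ("Randomization in title", 21),
    ("Structured abstract", 28),
    ("Setting and locations", 25),
    ("Outcome measures defined", 27),
    ("Sample size determination", 21),
    ("Random sequence generation", 25),
    ("Allocation concealment", 10),
    ("Blinded study", 16),
    ("Statistical methods", 28),
    ("ITT analysis", 10),   # implied by 35.71% in the abstract
    ("CIs reported", 6),    # implied by 21.43% in the abstract
]


def wilson_ci(k: int, n: int, z: float = 1.96) -> tuple[float, float]:
    # Assumption: Wilson score interval; the article does not name its method.
    p = k / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def truncate2(x: float) -> float:
    # Cut to two decimals (assumed convention); the small epsilon absorbs
    # floating-point error such as 0.9999999999999999 for a bound of exactly 1.
    return math.floor(x * 100 + 1e-9) / 100


def summary_rows(items, n: int = N_INCLUDED):
    """Return (item, count, percent, lower, upper) for each reporting item."""
    rows = []
    for name, k in items:
        lo, hi = wilson_ci(k, n)
        rows.append((name, k, round(100 * k / n, 2), truncate2(lo), truncate2(hi)))
    return rows


for name, k, pct, lo, hi in summary_rows(REPORTED_ITEMS):
    print(f"{name:<28} {k:>2}/{N_INCLUDED}  {pct:6.2f}%  (95% CI: {lo:.2f}-{hi:.2f})")
```

Under these assumptions every row agrees with the figures quoted in the abstract and results, which is a useful sanity check when appraising a paper that reports many proportions from one small sample.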